Install the JDK and set its environment variables (Hadoop's scripts rely on JAVA_HOME).

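Download hadoop-2.6.5

- From http://mirrors.tuna.tsinghua.edu.cn/apache/hadoop/common, download hadoop-2.6.5.tar.gz

- Extract the archive; no installer is needed

- Place the extracted folder at E:\0_jly\hadoop-2.6.5

- Download the Windows utilities for Hadoop (a few DLL files) and copy them into the folder above, typically its bin subdirectory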

Add the environment variable HADOOP_HOME (here E:\0_jly\hadoop-2.6.5)

- Then add %HADOOP_HOME%\bin to Path
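The two environment settings above are easy to get slightly wrong, so a quick check can help. The following is a minimal sketch, not part of Hadoop: `check_hadoop_env` is a hypothetical helper that inspects an environment mapping and reports whether HADOOP_HOME is set and whether its bin folder appears on Path.

```python
import os
from pathlib import PureWindowsPath


def check_hadoop_env(env):
    """Return a list of problems found in an environment mapping (empty if none)."""
    problems = []
    home = env.get("HADOOP_HOME")
    if not home:
        problems.append("HADOOP_HOME is not set")
        return problems
    expected_bin = PureWindowsPath(home) / "bin"
    raw_path = env.get("Path", env.get("PATH", ""))
    # Windows separates Path entries with ';'. PureWindowsPath compares
    # case-insensitively and ignores a trailing backslash.
    entries = [PureWindowsPath(p.strip()) for p in raw_path.split(";") if p.strip()]
    if expected_bin not in entries:
        problems.append(f"{expected_bin} is not on Path")
    return problems


if __name__ == "__main__":
    for line in check_hadoop_env(dict(os.environ)) or ["environment looks fine"]:
        print(line)
```

Run it in a new command prompt, since environment changes only reach processes started after the change.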

Create the namenode and datanode directories that will store the HDFS data, for example:

- E:\0_jly\hadoop-2.6.5\namenode

- E:\0_jly\hadoop-2.6.5\datanode

Edit the Hadoop configuration. Four main configuration files are involved, all under %HADOOP_HOME%\etc\hadoop:

- core-site.xml

<configuration>
  <property>
    <name>fs.defaultFS</name>
    <value>hdfs://localhost:9000</value>
  </property>
</configuration>

- hdfs-site.xml

<configuration>
  <property>
    <name>dfs.replication</name>
    <value>1</value>
  </property>
  <property>
    <name>dfs.namenode.name.dir</name>
    <value>/E:/0_jly/hadoop-2.6.5/namenode</value>
  </property>
  <property>
    <name>dfs.datanode.data.dir</name>
    <value>/E:/0_jly/hadoop-2.6.5/datanode</value>
  </property>
</configuration>

- mapred-site.xml (if it does not exist yet, copy it from mapred-site.xml.template)

<configuration>
  <property>
    <name>mapreduce.framework.name</name>
    <value>yarn</value>
  </property>
</configuration>

- yarn-site.xml

<configuration>
  <!-- Site specific YARN configuration properties -->
  <property>
    <name>yarn.nodemanager.aux-services</name>
    <value>mapreduce_shuffle</value>
  </property>
  <property>
    <name>yarn.nodemanager.aux-services.mapreduce_shuffle.class</name>
    <value>org.apache.hadoop.mapred.ShuffleHandler</value>
  </property>
  <property>
    <name>yarn.scheduler.minimum-allocation-mb</name>
    <value>1024</value>
  </property>
  <property>
    <name>yarn.nodemanager.resource.memory-mb</name>
    <value>4096</value>
  </property>
  <property>
    <name>yarn.nodemanager.resource.cpu-vcores</name>
    <value>2</value>
  </property>
</configuration>
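All four files share the same layout: a configuration root holding property elements with a name and a value. As an illustration only, the sketch below renders such a file from a Python dict and converts a Windows directory into the /E:/... form used in hdfs-site.xml. The helper names `to_hadoop_path` and `build_site_xml` are made up for this example and are not part of Hadoop.

```python
import xml.etree.ElementTree as ET
from pathlib import PureWindowsPath


def to_hadoop_path(windows_path):
    """Turn E:\\dir\\sub into /E:/dir/sub, the form used in hdfs-site.xml."""
    return "/" + PureWindowsPath(windows_path).as_posix()


def build_site_xml(properties):
    """Render a dict of property name -> value as a <configuration> document."""
    root = ET.Element("configuration")
    for name, value in properties.items():
        prop = ET.SubElement(root, "property")
        ET.SubElement(prop, "name").text = name
        ET.SubElement(prop, "value").text = str(value)
    if hasattr(ET, "indent"):  # pretty-printing is available from Python 3.9
        ET.indent(root)
    return ET.tostring(root, encoding="unicode")


# Assumed install location, taken from the steps above.
hadoop_home = r"E:\0_jly\hadoop-2.6.5"
hdfs_site = build_site_xml({
    "dfs.replication": 1,
    "dfs.namenode.name.dir": to_hadoop_path(hadoop_home + r"\namenode"),
    "dfs.datanode.data.dir": to_hadoop_path(hadoop_home + r"\datanode"),
})
print(hdfs_site)
```

Generating the files this way keeps the directory paths in one place, which helps when the install folder changes.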

Format the namenode

- hadoop namenode -format (hdfs namenode -format is the equivalent, non-deprecated form)

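Start or stop Hadoop

- start-all.cmd (located in %HADOOP_HOME%\sbin, so run it from that directory or add that directory to Path)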

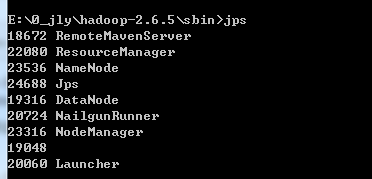

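- If the second startup fails, check with jps:

- the DataNode process has not started

- The error message says that the namenode clusterID and the datanode clusterID do not match. This usually happens after the namenode has been formatted again, which generates a new clusterID

- Look up the clusterID in E:\0_jly\hadoop-2.6.5\namenode\current\VERSION

- and change the clusterID in E:\0_jly\hadoop-2.6.5\datanode\current\VERSION to the same value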

- stop-all.cmd
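The clusterID fix described above can also be scripted. This is a rough sketch under the assumption that both VERSION files are plain key=value text containing a clusterID line; `read_cluster_id` and `sync_cluster_id` are hypothetical helpers, not Hadoop tools. Stop Hadoop before running anything like this.

```python
import re
from pathlib import Path

# Matches the clusterID line of a VERSION file (key=value, one pair per line).
CLUSTER_ID = re.compile(r"^clusterID=(.*)$", re.MULTILINE)


def read_cluster_id(version_file):
    """Return the clusterID stored in a VERSION file."""
    match = CLUSTER_ID.search(Path(version_file).read_text())
    if match is None:
        raise ValueError(f"no clusterID line in {version_file}")
    return match.group(1).strip()


def sync_cluster_id(namenode_version, datanode_version):
    """Copy the namenode clusterID into the datanode VERSION file.

    Returns True if the datanode file was rewritten, False if it already matched.
    """
    wanted = read_cluster_id(namenode_version)
    if read_cluster_id(datanode_version) == wanted:
        return False
    path = Path(datanode_version)
    updated = CLUSTER_ID.sub(lambda m: "clusterID=" + wanted, path.read_text(), count=1)
    path.write_text(updated)
    return True


# Example call, assuming the directories configured in hdfs-site.xml:
# sync_cluster_id(r"E:\0_jly\hadoop-2.6.5\namenode\current\VERSION",
#                 r"E:\0_jly\hadoop-2.6.5\datanode\current\VERSION")
```

Only the clusterID line is touched; the other entries in the datanode file stay as they were.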

View MapReduce jobs:

- localhost:8088

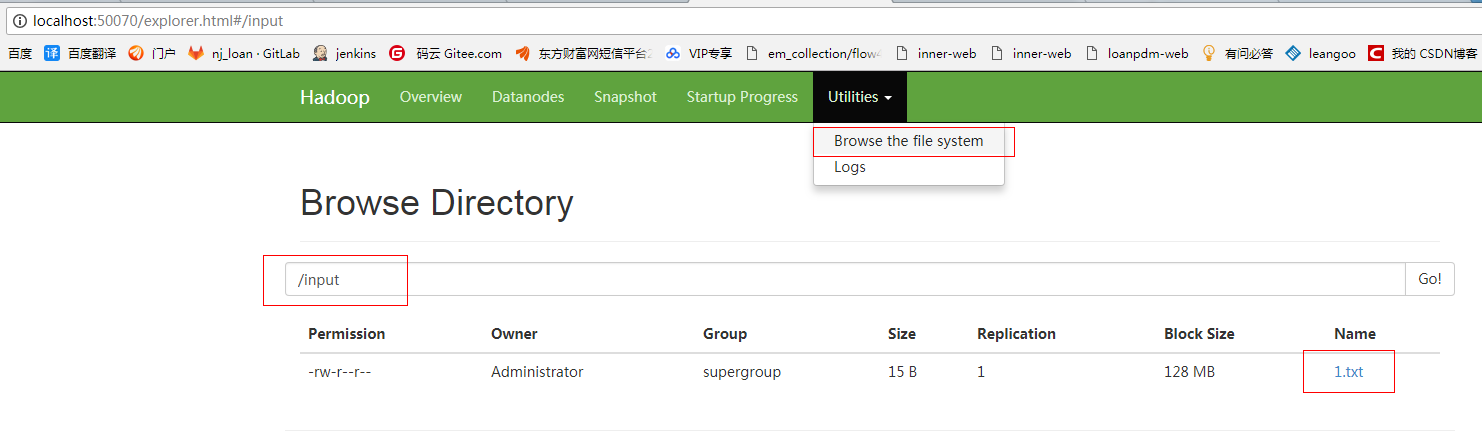

View the HDFS file system:

- localhost:50070
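If one of the two pages does not load, it helps to know whether anything is listening on the port at all. Below is a small sketch that tries a TCP connection to each port; the `is_port_open` helper and the `WEB_UIS` table are assumptions made for this example.

```python
import socket


def is_port_open(host, port, timeout=2.0):
    """Return True if a TCP connection to host:port succeeds within the timeout."""
    try:
        with socket.create_connection((host, port), timeout=timeout):
            return True
    except OSError:
        return False


# Ports of the two web pages mentioned above.
WEB_UIS = {"MapReduce/YARN jobs": 8088, "HDFS file system": 50070}

if __name__ == "__main__":
    for name, port in WEB_UIS.items():
        state = "reachable" if is_port_open("localhost", port) else "not reachable"
        print(f"{name} page on localhost:{port} is {state}")
```

A port that is not reachable usually means the corresponding daemon did not start, which jps can confirm.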

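Test the wordcount example bundled with Hadoop

- Create an input directory in HDFS:

hdfs dfs -mkdir /input

- The leading / matters: without it, input is not created under the root directory

- It is created under the current user's HDFS home directory instead, for example /user/Administrator/

- Upload a local file:

hdfs dfs -put /E:/BaiduNetdiskDownload/1.txt /input

- The uploaded file can now be seen in HDFS, for example in the file browser at localhost:50070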
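The difference between /input and input comes down to how relative HDFS paths are resolved. The sketch below mimics that rule for illustration; it is not Hadoop's code, `resolve_hdfs_path` is a hypothetical helper, and it assumes the default home directory prefix /user.

```python
import posixpath


def resolve_hdfs_path(path, user):
    """Resolve a scheme-less HDFS path.

    Absolute paths are kept as they are; relative paths are placed under
    the user's home directory /user/<user>.
    """
    if path.startswith("/"):
        return posixpath.normpath(path)
    return posixpath.normpath(posixpath.join("/user", user, path))


print(resolve_hdfs_path("/input", "Administrator"))
print(resolve_hdfs_path("input", "Administrator"))
```

The first call stays at the root, while the second ends up below /user/Administrator, which is why the wordcount command later has to use the same spelling as the mkdir command.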

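Check which processes are running:

- jps (after start-all.cmd, expect NameNode, DataNode, ResourceManager and NodeManager)

Run the wordcount job (the /output directory must not exist yet):

- hadoop jar E:\0_jly\hadoop-2.6.5\share\hadoop\mapreduce\hadoop-mapreduce-examples-2.6.5.jar wordcount /input /output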

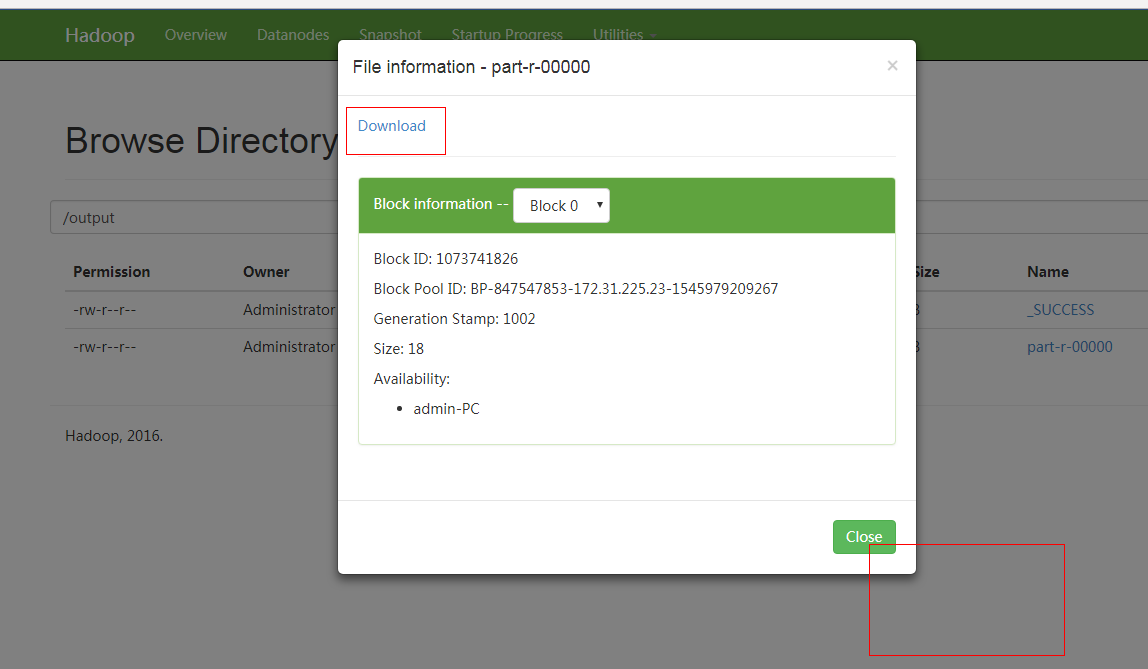

- The job writes its results to /output, from where they can be downloaded
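To sanity-check the downloaded result, it can help to compute the same counts locally on a small file. The following is a plain-Python approximation of what the wordcount example produces, assuming whitespace-separated tokens and one tab-separated word and count per output line, sorted by word. It is a comparison aid, not the MapReduce implementation.

```python
from collections import Counter


def word_count(lines):
    """Count whitespace-separated tokens across all lines."""
    counts = Counter()
    for line in lines:
        counts.update(line.split())
    return counts


def format_output(counts):
    """One 'word<TAB>count' line per word, sorted by word."""
    return "\n".join(f"{word}\t{count}" for word, count in sorted(counts.items()))


# Made-up sample input standing in for the uploaded 1.txt.
sample = ["hello hadoop", "hello hdfs"]
print(format_output(word_count(sample)))
```

For a real comparison, pass the lines of the local copy of the uploaded file instead of the sample list.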