DeepGLO

代码:https://github.com/rajatsen91/deepglo

文章主要有三点创新:

- Leveled-Init TCN, 对 TCN 的改进,使用 LeveledInit 方法初始化 TCN 权重,使得 TCN 更好地处理不同尺度的时间序列;

- TCN-MF,用 TCN 来对多变量时间序列预测的矩阵分解法做正则化

- DeepGLO,把矩阵分解法 MF 得到的 包含全局信息的因子序列及其预测值 作为局部序列预测时的协变量。

LeveledInit TCN

从如下代码中可以看出对 TCN 权值初始化部分的改动

class Chomp1d(nn.Module):

def __init__(self, chomp_size):

super(Chomp1d, self).__init__()

self.chomp_size = chomp_size

def forward(self, x):

return x[:, :, : -self.chomp_size].contiguous()

class TemporalBlock(nn.Module):

def __init__(

self,

n_inputs,

n_outputs,

kernel_size,

stride,

dilation,

padding,

dropout=0.1,

init=True,

):

super(TemporalBlock, self).__init__()

self.kernel_size = kernel_size

self.conv1 = weight_norm(

nn.Conv1d(

n_inputs,

n_outputs,

kernel_size,

stride=stride,

padding=padding,

dilation=dilation,

)

)

self.chomp1 = Chomp1d(padding)

self.relu1 = nn.ReLU()

self.dropout1 = nn.Dropout(dropout)

self.conv2 = weight_norm(

nn.Conv1d(

n_outputs,

n_outputs,

kernel_size,

stride=stride,

padding=padding,

dilation=dilation,

)

)

self.chomp2 = Chomp1d(padding)

self.relu2 = nn.ReLU()

self.dropout2 = nn.Dropout(dropout)

self.net = nn.Sequential(

self.conv1,

self.chomp1,

self.relu1,

self.dropout1,

self.conv2,

self.chomp2,

self.relu2,

self.dropout2,

)

self.downsample = (

nn.Conv1d(n_inputs, n_outputs, 1) if n_inputs != n_outputs else None

)

self.init = init

self.relu = nn.ReLU()

self.init_weights()

def init_weights(self):

if self.init:

nn.init.normal_(self.conv1.weight, std=1e-3)

nn.init.normal_(self.conv2.weight, std=1e-3)

self.conv1.weight[:, 0, :] += (

1.0 / self.kernel_size

) ###new initialization scheme

self.conv2.weight += 1.0 / self.kernel_size ###new initialization scheme

nn.init.normal_(self.conv1.bias, std=1e-6)

nn.init.normal_(self.conv2.bias, std=1e-6)

else:

nn.init.xavier_uniform_(self.conv1.weight)

nn.init.xavier_uniform_(self.conv2.weight)

if self.downsample is not None:

self.downsample.weight.data.normal_(0, 0.1)

def forward(self, x):

out = self.net(x)

res = x if self.downsample is None else self.downsample(x)

return self.relu(out + res)

class TemporalBlock_last(nn.Module):

def __init__(

self,

n_inputs,

n_outputs,

kernel_size,

stride,

dilation,

padding,

dropout=0.2,

init=True,

):

super(TemporalBlock_last, self).__init__()

self.kernel_size = kernel_size

self.conv1 = weight_norm(

nn.Conv1d(

n_inputs,

n_outputs,

kernel_size,

stride=stride,

padding=padding,

dilation=dilation,

)

)

self.chomp1 = Chomp1d(padding)

self.relu1 = nn.ReLU()

self.dropout1 = nn.Dropout(dropout)

self.conv2 = weight_norm(

nn.Conv1d(

n_outputs,

n_outputs,

kernel_size,

stride=stride,

padding=padding,

dilation=dilation,

)

)

self.chomp2 = Chomp1d(padding)

self.relu2 = nn.ReLU()

self.dropout2 = nn.Dropout(dropout)

self.net = nn.Sequential(

self.conv1,

self.chomp1,

self.dropout1,

self.conv2,

self.chomp2,

self.dropout2,

)

self.downsample = (

nn.Conv1d(n_inputs, n_outputs, 1) if n_inputs != n_outputs else None

)

self.init = init

self.relu = nn.ReLU()

self.init_weights()

def init_weights(self):

if self.init:

nn.init.normal_(self.conv1.weight, std=1e-3)

nn.init.normal_(self.conv2.weight, std=1e-3)

self.conv1.weight[:, 0, :] += (

1.0 / self.kernel_size

) ###new initialization scheme

self.conv2.weight += 1.0 / self.kernel_size ###new initialization scheme

nn.init.normal_(self.conv1.bias, std=1e-6)

nn.init.normal_(self.conv2.bias, std=1e-6)

else:

nn.init.xavier_uniform_(self.conv1.weight)

nn.init.xavier_uniform_(self.conv2.weight)

if self.downsample is not None:

self.downsample.weight.data.normal_(0, 0.1)

def forward(self, x):

out = self.net(x)

res = x if self.downsample is None else self.downsample(x)

return out + res

class TemporalConvNet(nn.Module):

def __init__(self, num_inputs, num_channels, kernel_size=2, dropout=0.1, init=True):

super(TemporalConvNet, self).__init__()

layers = []

self.num_channels = num_channels

self.num_inputs = num_inputs

self.kernel_size = kernel_size

self.dropout = dropout

num_levels = len(num_channels)

for i in range(num_levels):

dilation_size = 2 ** i

in_channels = num_inputs if i == 0 else num_channels[i - 1]

out_channels = num_channels[i]

if i == num_levels - 1:

layers += [

TemporalBlock_last(

in_channels,

out_channels,

kernel_size,

stride=1,

dilation=dilation_size,

padding=(kernel_size - 1) * dilation_size,

dropout=dropout,

init=init,

)

]

else:

layers += [

TemporalBlock(

in_channels,

out_channels,

kernel_size,

stride=1,

dilation=dilation_size,

padding=(kernel_size - 1) * dilation_size,

dropout=dropout,

init=init,

)

]

self.network = nn.Sequential(*layers)

def forward(self, x):

return self.network(x)

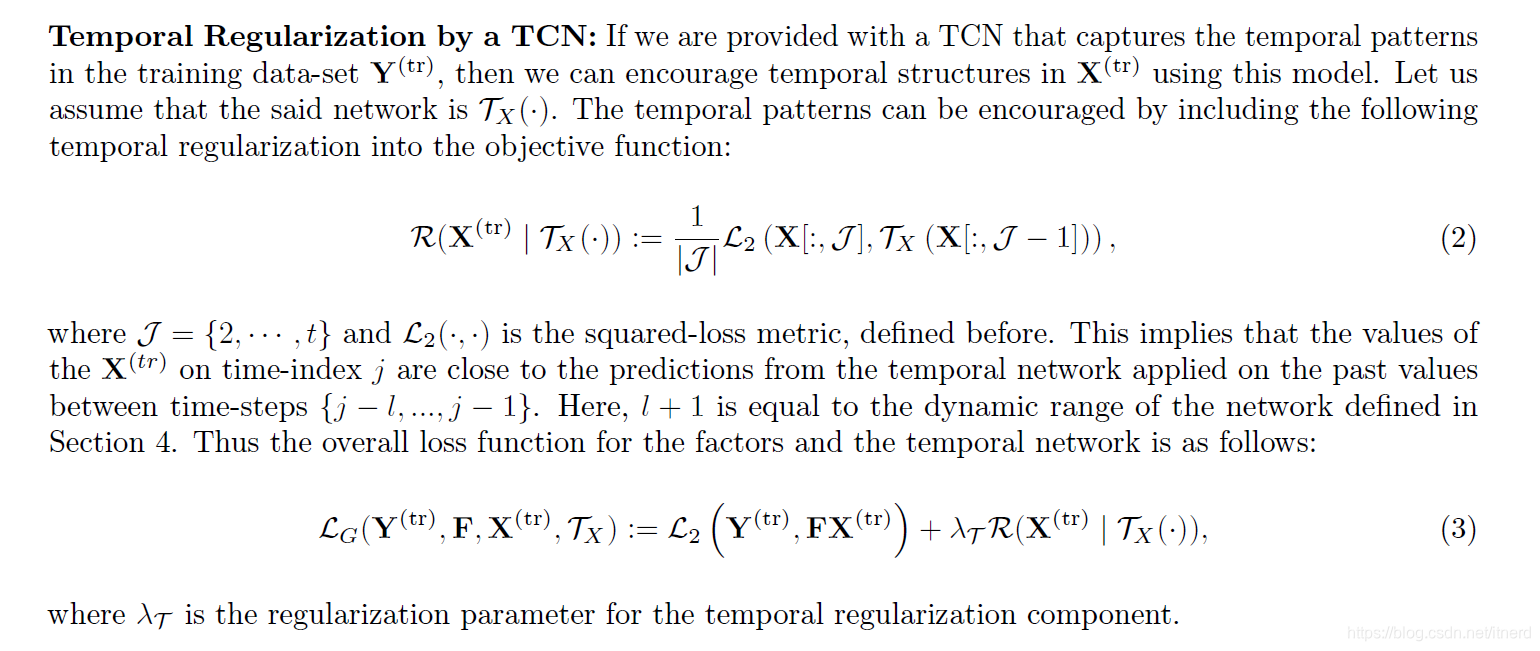

TCN-MF

Temporal Convolution Network regularized Matrix Factorization

本质上和动态因子图是一回事:

一方面假设原始的多变量序列

Y

Y

Y 是某些典型动态序列

X

X

X 的线性组合:

Y

=

F

X

Y = FX

Y=FX

另一方面,

X

X

X 的动态行为可以用 TCN 来建模:

X

t

=

T

X

(

X

t

−

1

)

X_t = \mathbf{\Tau}_X(X_{t-1})

Xt=TX(Xt−1)