Understanding Kubernetes Ingress in Depth: Traffic Routing, Load Balancing, and Security Configuration

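Kubernetes Ingress is an important component for managing external traffic in a Kubernetes cluster. It gives users an intuitive yet powerful way to control how external traffic is routed and accessed, by defining rules and configuration.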

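1. What is Ingress?

In Kubernetes, Ingress is an API resource that defines how external traffic enters the cluster. It lets us direct traffic to different backend Services based on host name, path, and other conditions. In short, Ingress is a flexible traffic management tool that allows multiple services running in the cluster to share the same IP address and port.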

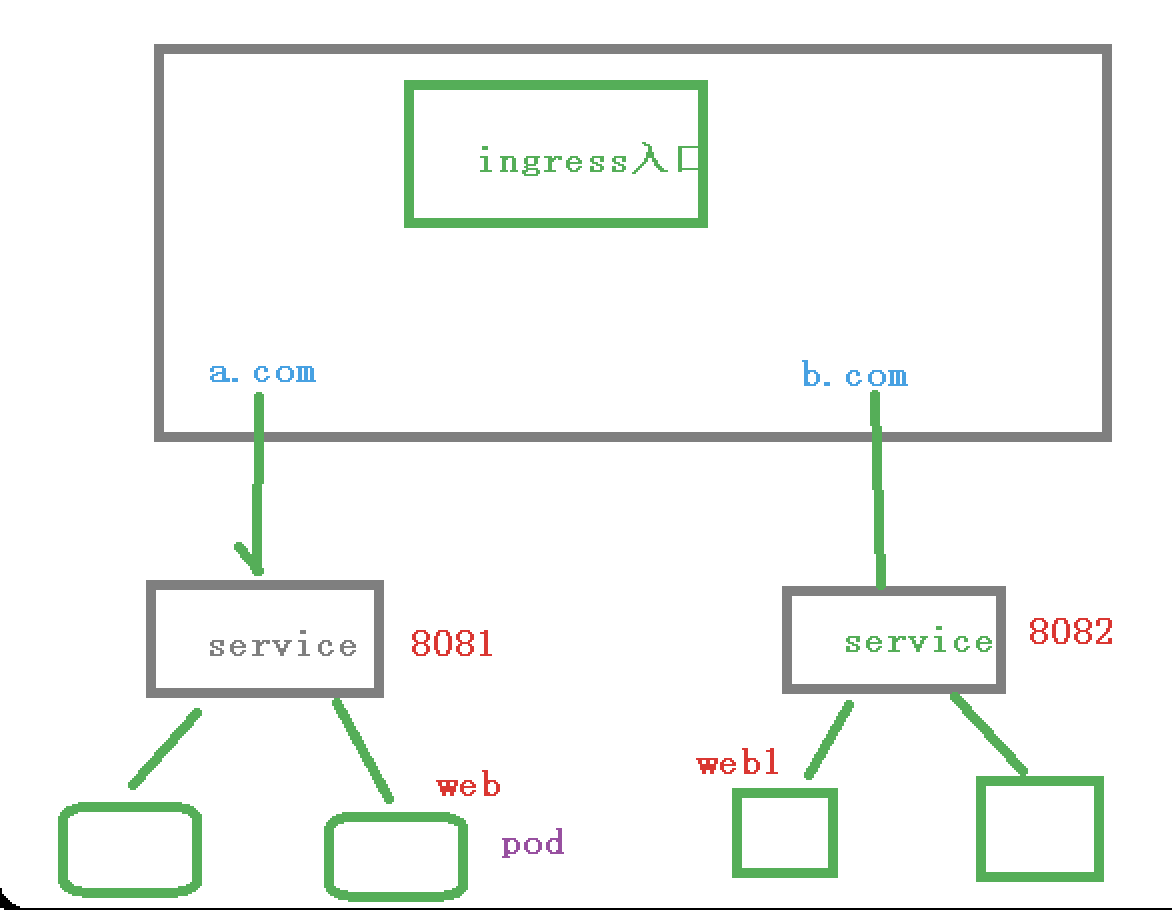

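Normally, Pod and Service IPs can only be reached from inside the cluster. Requests from outside the cluster are load-balanced to the Pods behind a Service, and a Service can only be exposed to the outside through NodePort or LoadBalancer. With NodePort, every service is reached as node IP + exposed port, which means each node port can be used by only one Service, because ports cannot be reused. In practice, however, services are accessed by domain name, and that is why Ingress was introduced. Ingress is backed by a controller, but a rather special one: it acts like a gateway and adds a layer in front of the Service. A request first passes through the Ingress, then reaches the Service, and the Service forwards it to the application running in the Pod.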
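To make the idea concrete, the routing decision made for each request can be sketched as a small shell function. This is only a toy illustration of host and path matching, not how ingress-nginx is implemented. The host `app.example.com` and the service names `web-service` and `api-service` are hypothetical.

```shell
#!/bin/sh
# Toy model of Ingress routing: pick a backend from the request's host and path.
# Hosts and service names are hypothetical placeholders.

# Succeeds when $2 (request path) matches $1 (rule path) as a path prefix,
# compared element by element: /api matches /api and /api/v1 but not /apix.
path_has_prefix() {
  prefix="${1%/}"   # drop a trailing slash; "/" becomes the empty string
  case "$2" in
    "$prefix" | "$prefix"/*) return 0 ;;
    *) return 1 ;;
  esac
}

# Prints the backend (service:port) chosen for a host and a path.
route_request() {
  req_host="$1"
  req_path="$2"
  if [ "$req_host" = "app.example.com" ] && path_has_prefix /api "$req_path"; then
    echo "api-service:80"
  elif [ "$req_host" = "app.example.com" ] && path_has_prefix / "$req_path"; then
    echo "web-service:80"
  else
    echo "default-backend"
  fi
}

route_request app.example.com /api/v1   # prints api-service:80
route_request app.example.com /index    # prints web-service:80
route_request other.example.com /       # prints default-backend
```

Both services are reached through the same address and port; only the host name and path of the request decide which backend receives it.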

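2. Workflow

3. Installing ingress-nginx

First, install ingress-nginx with Helm.

3.1 Install Helm

Helm works much like a package manager. It mainly addresses the problem that Kubernetes resource manifests become too numerous to maintain.

Deploying an application quickly with Helm:

- Search for a chart: helm search repo nginx

- Install an application: helm install <release-name> <chart>

- List installed applications: helm list

- Check an application's status: helm status <release-name>

# Download the binary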

[root@k8s-master helm]# wget https://get.helm.sh/helm-v3.2.3-linux-amd64.tar.gz

--2024-01-04 17:06:53-- https://get.helm.sh/helm-v3.2.3-linux-amd64.tar.gz

Resolving get.helm.sh (get.helm.sh)... 152.199.39.108, 2606:2800:247:1cb7:261b:1f9c:2074:3c

Connecting to get.helm.sh (get.helm.sh)|152.199.39.108|:443... connected.

HTTP request sent, awaiting response... 200 OK

Length: 12924654 (12M) [application/x-tar]

Saving to: 'helm-v3.2.3-linux-amd64.tar.gz'

100%[====================================================================================================================>] 12,924,654 3.87MB/s in 3.2s

2024-01-04 17:06:59 (3.87 MB/s) - 'helm-v3.2.3-linux-amd64.tar.gz' saved [12924654/12924654]

[root@k8s-master helm]# ls

helm-v3.2.3-linux-amd64.tar.gz

# Extract the archive

[root@k8s-master helm]# tar -zxvf helm-v3.2.3-linux-amd64.tar.gz

linux-amd64/

linux-amd64/README.md

linux-amd64/LICENSE

linux-amd64/helm

[root@k8s-master helm]# ls

helm-v3.2.3-linux-amd64.tar.gz linux-amd64

[root@k8s-master helm]# cd linux-amd64/

[root@k8s-master linux-amd64]# ls

helm LICENSE README.md

[root@k8s-master linux-amd64]# cp helm /usr/local/bin/

[root@k8s-master linux-amd64]# cd ~

[root@k8s-master ~]# pwd

/root

# Print the version to confirm that the installation succeeded

[root@k8s-master ~]# helm version

version.BuildInfo{Version:"v3.2.3", GitCommit:"8f832046e258e2cb800894579b1b3b50c2d83492", GitTreeState:"clean", GoVersion:"go1.13.12"}

3.2 Add the Helm repository

Once a repository is added, charts can be downloaded from it.

# Add the ingress-nginx repository

[root@k8s-master ~]# helm repo add ingress-nginx https://kubernetes.github.io/ingress-nginx

"ingress-nginx" has been added to your repositories

# List the repositories

[root@k8s-master ~]# helm repo list

NAME URL

ingress-nginx https://kubernetes.github.io/ingress-nginx

# Search for ingress-nginx; the result is a preconfigured installation package (a chart)

[root@k8s-master ~]# helm search repo ingress-nginx

NAME CHART VERSION APP VERSION DESCRIPTION

ingress-nginx/ingress-nginx 4.9.0 1.9.5 Ingress controller for Kubernetes using NGINX a...

3.3 Download the chart

This downloads the latest version:

[root@k8s-master ~]# helm pull ingress-nginx/ingress-nginx

# Error: Get https://github.com/kubernetes/ingress-nginx/releases/download/helm-chart-4.9.0/ingress-nginx-4.9.0.tgz: unexpected EOF

# If this error appears, retrying the download a few times usually fixes it.
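Because the failure is transient, the retry can be automated. The following is a minimal sketch of a retry helper. The function name `retry`, the `RETRY_DELAY` variable, and the attempt count are illustrative choices and are not part of Helm.

```shell
#!/bin/sh
# retry MAX CMD [ARGS...]: run CMD until it succeeds, at most MAX times.
# Returns 0 on the first success and 1 if every attempt fails.
retry() {
  max="$1"
  shift
  attempt=1
  while [ "$attempt" -le "$max" ]; do
    if "$@"; then
      return 0
    fi
    echo "attempt $attempt of $max failed" >&2
    attempt=$((attempt + 1))
    sleep "${RETRY_DELAY:-2}"   # pause between attempts (seconds)
  done
  return 1
}

# Usage (requires helm and the repository added earlier):
#   retry 5 helm pull ingress-nginx/ingress-nginx
```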

This downloads version 4.4.2 of ingress-nginx:

[root@k8s-master ~]# helm pull ingress-nginx/ingress-nginx --version 4.4.2

[root@k8s-master ~]# ls

aliyun-components.yaml anaconda-ks.cfg deployments ingress-nginx-4.4.2.tgz k8s pods

[root@k8s-master ~]# mv ingress-nginx-4.4.2.tgz k8s/helm

[root@k8s-master ~]# cd k8s/helm

[root@k8s-master helm]# ls

helm-v3.2.3-linux-amd64.tar.gz ingress-nginx-4.4.2.tgz ingress-nginx-4.9.0.tgz linux-amd64

[root@k8s-master helm]# tar -xf ingress-nginx-4.4.2.tgz

[root@k8s-master helm]# ls

helm-v3.2.3-linux-amd64.tar.gz ingress-nginx ingress-nginx-4.4.2.tgz ingress-nginx-4.9.0.tgz linux-amd64

[root@k8s-master helm]# cd ingress-nginx

[root@k8s-master ingress-nginx]# ls

CHANGELOG.md changelog.md.gotmpl Chart.yaml ci OWNERS README.md README.md.gotmpl templates values.yaml

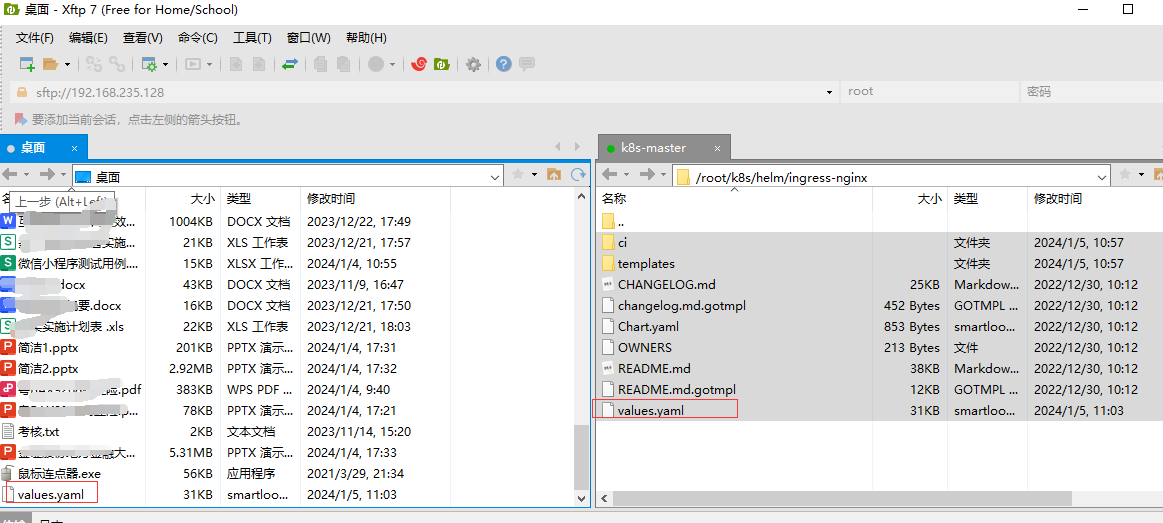

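[root@k8s-master ingress-nginx]# vi values.yaml

3.4 Configure parameters

## Edit the image addresses in values.yaml: switch to a registry mirror in China

[root@k8s-master ingress-nginx]# vi values.yaml

# Find these two lines and change them to the following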

registry: registry.cn-hangzhou.aliyuncs.com

image: google_containers/nginx-ingress-controller

# Comment out these two lines

#digest: sha256:b3aba22b1da80e7acfc52b115cae1d4c687172cbf2b742d5b502419c25ff340e

#digestChroot: sha256:9a8d7b25a846a6461cd044b9aea9cf6cad972bcf2e64d9fd246c0279979aad2d

# Search for /kube-web to find this line: image: ingress-nginx/kube-webhook-certgen

# Change it to the following

registry: registry.cn-hangzhou.aliyuncs.com

image: google_containers/kube-webhook-certgen

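# Also comment out the digest line here

tag: v1.5.1 # keep this consistent with the version tag of the controller image above

# Change the deployment kind to DaemonSet: find the line below the comment "-- Use a `DaemonSet` or `Deployment`"

kind: Deployment # change this to DaemonSet

# Further down, find nodeSelector and add ingress: "true"

nodeSelector:
  ingress: "true" # added selector: deploy only on nodes labeled ingress=true

# Change dnsPolicy from ClusterFirst (cluster DNS first) to the host-network variant below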

dnsPolicy: ClusterFirstWithHostNet

hostNetwork: true # use the host's network

Set admissionWebhooks.enabled to false.

# Search for LoadBalancer directly

Under service, change type from LoadBalancer to ClusterIP. Use LoadBalancer only when the servers run on a cloud platform.

Setting these parameters by hand in vi is tedious. It is easier to edit the file in a local editor and then upload it.
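As an alternative to editing the full values.yaml, the same changes can be kept in a small override file and passed to Helm with `-f`. The sketch below assumes chart version 4.4.2 and the key names that appear in its values.yaml. The file name `values-override.yaml` and the function name are illustrative, and setting a digest to an empty string is assumed to have the same effect as commenting it out.

```shell
#!/bin/sh
# Write the overrides described above into a separate file.
# File name and function name are hypothetical; keys follow the 4.4.2 chart.
write_ingress_overrides() {
  cat > "${1:-values-override.yaml}" <<'EOF'
controller:
  kind: DaemonSet
  hostNetwork: true
  dnsPolicy: ClusterFirstWithHostNet
  nodeSelector:
    kubernetes.io/os: linux
    ingress: "true"
  image:
    registry: registry.cn-hangzhou.aliyuncs.com
    image: google_containers/nginx-ingress-controller
    digest: ""
    digestChroot: ""
  service:
    type: ClusterIP
  admissionWebhooks:
    enabled: false
    patch:
      image:
        registry: registry.cn-hangzhou.aliyuncs.com
        image: google_containers/kube-webhook-certgen
        tag: v1.5.1
        digest: ""
EOF
}

write_ingress_overrides values-override.yaml

# Then install from the unpacked chart directory (requires helm and a cluster):
#   helm install ingress-nginx -n ingress-nginx -f values-override.yaml .
```

Keeping the overrides separate leaves the chart's own values.yaml untouched, which makes it easier to see what was changed when the chart is upgraded.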

The complete values.yaml after these changes:

## nginx configuration
## Ref: https://github.com/kubernetes/ingress-nginx/blob/main/docs/user-guide/nginx-configuration/index.md
##

## Overrides for generated resource names
# See templates/_helpers.tpl
# nameOverride:
# fullnameOverride:

## Labels to apply to all resources
##
commonLabels: {}
# scmhash: abc123
# myLabel: aakkmd

controller:
  name: controller
  image:
    ## Keep false as default for now!
    chroot: false
    registry: registry.cn-hangzhou.aliyuncs.com
    image: google_containers/nginx-ingress-controller
    ## for backwards compatibility consider setting the full image url via the repository value below
    ## use *either* current default registry/image or repository format or installing chart by providing the values.yaml will fail
    ## repository:
    tag: "v1.5.1"
    #digest: sha256:4ba73c697770664c1e00e9f968de14e08f606ff961c76e5d7033a4a9c593c629
    #digestChroot: sha256:c1c091b88a6c936a83bd7b098662760a87868d12452529bad0d178fb36147345
    pullPolicy: IfNotPresent
    # www-data -> uid 101
    runAsUser: 101
    allowPrivilegeEscalation: true
  # -- Use an existing PSP instead of creating one
  existingPsp: ""
  # -- Configures the controller container name
  containerName: controller
  # -- Configures the ports that the nginx-controller listens on
  containerPort:
    http: 80
    https: 443
  # -- Will add custom configuration options to Nginx https://kubernetes.github.io/ingress-nginx/user-guide/nginx-configuration/configmap/
  config: {}
  # -- Annotations to be added to the controller config configuration configmap.
  configAnnotations: {}
  # -- Will add custom headers before sending traffic to backends according to https://github.com/kubernetes/ingress-nginx/tree/main/docs/examples/customization/custom-headers
  proxySetHeaders: {}
  # -- Will add custom headers before sending response traffic to the client according to: https://kubernetes.github.io/ingress-nginx/user-guide/nginx-configuration/configmap/#add-headers
  addHeaders: {}
  # -- Optionally customize the pod dnsConfig.
  dnsConfig: {}
  # -- Optionally customize the pod hostname.
  hostname: {}
  # -- Optionally change this to ClusterFirstWithHostNet in case you have 'hostNetwork: true'.
  # By default, while using host network, name resolution uses the host's DNS. If you wish nginx-controller
  # to keep resolving names inside the k8s network, use ClusterFirstWithHostNet.
  dnsPolicy: ClusterFirstWithHostNet
  # -- Bare-metal considerations via the host network https://kubernetes.github.io/ingress-nginx/deploy/baremetal/#via-the-host-network
  # Ingress status was blank because there is no Service exposing the NGINX Ingress controller in a configuration using the host network, the default --publish-service flag used in standard cloud setups does not apply
  reportNodeInternalIp: false
  # -- Process Ingress objects without ingressClass annotation/ingressClassName field
  # Overrides value for --watch-ingress-without-class flag of the controller binary
  # Defaults to false
  watchIngressWithoutClass: false
  # -- Process IngressClass per name (additionally as per spec.controller).
  ingressClassByName: false
  # -- This configuration defines if Ingress Controller should allow users to set
  # their own *-snippet annotations, otherwise this is forbidden / dropped
  # when users add those annotations.
  # Global snippets in ConfigMap are still respected
  allowSnippetAnnotations: true
  # -- Required for use with CNI based kubernetes installations (such as ones set up by kubeadm),
  # since CNI and hostport don't mix yet. Can be deprecated once https://github.com/kubernetes/kubernetes/issues/23920
  # is merged
  hostNetwork: true
  ## Use host ports 80 and 443
  ## Disabled by default
  hostPort:
    # -- Enable 'hostPort' or not
    enabled: false
    ports:
      # -- 'hostPort' http port
      http: 80
      # -- 'hostPort' https port
      https: 443
  # -- Election ID to use for status update, by default it uses the controller name combined with a suffix of 'leader'
  electionID: ""
  ## This section refers to the creation of the IngressClass resource
  ## IngressClass resources are supported since k8s >= 1.18 and required since k8s >= 1.19
  ingressClassResource:
    # -- Name of the ingressClass
    name: nginx
    # -- Is this ingressClass enabled or not
    enabled: true
    # -- Is this the default ingressClass for the cluster
    default: false
    # -- Controller-value of the controller that is processing this ingressClass
    controllerValue: "k8s.io/ingress-nginx"
    # -- Parameters is a link to a custom resource containing additional
    # configuration for the controller. This is optional if the controller
    # does not require extra parameters.
    parameters: {}
  # -- For backwards compatibility with ingress.class annotation, use ingressClass.
  # Algorithm is as follows, first ingressClassName is considered, if not present, controller looks for ingress.class annotation
  ingressClass: nginx
  # -- Labels to add to the pod container metadata
  podLabels: {}
  # key: value
  # -- Security Context policies for controller pods
  podSecurityContext: {}
  # -- See https://kubernetes.io/docs/tasks/administer-cluster/sysctl-cluster/ for notes on enabling and using sysctls
  sysctls: {}
  # sysctls:
  #   "net.core.somaxconn": "8192"
  # -- Allows customization of the source of the IP address or FQDN to report
  # in the ingress status field. By default, it reads the information provided
  # by the service. If disable, the status field reports the IP address of the
  # node or nodes where an ingress controller pod is running.
  publishService:
    # -- Enable 'publishService' or not
    enabled: true
    # -- Allows overriding of the publish service to bind to
    # Must be <namespace>/<service_name>
    pathOverride: ""
  # Limit the scope of the controller to a specific namespace
  scope:
    # -- Enable 'scope' or not
    enabled: false
    # -- Namespace to limit the controller to; defaults to $(POD_NAMESPACE)
    namespace: ""
    # -- When scope.enabled == false, instead of watching all namespaces, we watching namespaces whose labels
    # only match with namespaceSelector. Format like foo=bar. Defaults to empty, means watching all namespaces.
    namespaceSelector: ""
  # -- Allows customization of the configmap / nginx-configmap namespace; defaults to $(POD_NAMESPACE)
  configMapNamespace: ""
  tcp:
    # -- Allows customization of the tcp-services-configmap; defaults to $(POD_NAMESPACE)
    configMapNamespace: ""
    # -- Annotations to be added to the tcp config configmap
    annotations: {}
  udp:
    # -- Allows customization of the udp-services-configmap; defaults to $(POD_NAMESPACE)
    configMapNamespace: ""
    # -- Annotations to be added to the udp config configmap
    annotations: {}
  # -- Maxmind license key to download GeoLite2 Databases.
  ## https://blog.maxmind.com/2019/12/18/significant-changes-to-accessing-and-using-geolite2-databases
  maxmindLicenseKey: ""
  # -- Additional command line arguments to pass to nginx-ingress-controller
  # E.g. to specify the default SSL certificate you can use
  extraArgs: {}
  ## extraArgs:
  ##   default-ssl-certificate: "<namespace>/<secret_name>"
  # -- Additional environment variables to set
  extraEnvs: []
  # extraEnvs:
  #   - name: FOO
  #     valueFrom:
  #       secretKeyRef:
  #         key: FOO
  #         name: secret-resource
  # -- Use a `DaemonSet` or `Deployment`
  kind: DaemonSet
  # -- Annotations to be added to the controller Deployment or DaemonSet
  ##
  annotations: {}
  #  keel.sh/pollSchedule: "@every 60m"
  # -- Labels to be added to the controller Deployment or DaemonSet and other resources that do not have option to specify labels
  ##
  labels: {}
  #  keel.sh/policy: patch
  #  keel.sh/trigger: poll
  # -- The update strategy to apply to the Deployment or DaemonSet
  ##
  updateStrategy: {}
  #  rollingUpdate:
  #    maxUnavailable: 1
  #  type: RollingUpdate
  # -- `minReadySeconds` to avoid killing pods before we are ready
  ##
  minReadySeconds: 0
  # -- Node tolerations for server scheduling to nodes with taints
  ## Ref: https://kubernetes.io/docs/concepts/configuration/assign-pod-node/
  ##
  tolerations: []
  #  - key: "key"
  #    operator: "Equal|Exists"
  #    value: "value"
  #    effect: "NoSchedule|PreferNoSchedule|NoExecute(1.6 only)"
  # -- Affinity and anti-affinity rules for server scheduling to nodes
  ## Ref: https://kubernetes.io/docs/concepts/configuration/assign-pod-node/#affinity-and-anti-affinity
  ##
  affinity: {}
  # # An example of preferred pod anti-affinity, weight is in the range 1-100
  # podAntiAffinity:
  #   preferredDuringSchedulingIgnoredDuringExecution:
  #   - weight: 100
  #     podAffinityTerm:
  #       labelSelector:
  #         matchExpressions:
  #         - key: app.kubernetes.io/name
  #           operator: In
  #           values:
  #           - ingress-nginx
  #         - key: app.kubernetes.io/instance
  #           operator: In
  #           values:
  #           - ingress-nginx
  #         - key: app.kubernetes.io/component
  #           operator: In
  #           values:
  #           - controller
  #       topologyKey: kubernetes.io/hostname
  # # An example of required pod anti-affinity
  # podAntiAffinity:
  #   requiredDuringSchedulingIgnoredDuringExecution:
  #   - labelSelector:
  #       matchExpressions:
  #       - key: app.kubernetes.io/name
  #         operator: In
  #         values:
  #         - ingress-nginx
  #       - key: app.kubernetes.io/instance
  #         operator: In
  #         values:
  #         - ingress-nginx
  #       - key: app.kubernetes.io/component
  #         operator: In
  #         values:
  #         - controller
  #     topologyKey: "kubernetes.io/hostname"
  # -- Topology spread constraints rely on node labels to identify the topology domain(s) that each Node is in.
  ## Ref: https://kubernetes.io/docs/concepts/workloads/pods/pod-topology-spread-constraints/
  ##
  topologySpreadConstraints: []
  # - maxSkew: 1
  #   topologyKey: topology.kubernetes.io/zone
  #   whenUnsatisfiable: DoNotSchedule
  #   labelSelector:
  #     matchLabels:
  #       app.kubernetes.io/instance: ingress-nginx-internal
  # -- `terminationGracePeriodSeconds` to avoid killing pods before we are ready
  ## wait up to five minutes for the drain of connections
  ##
  terminationGracePeriodSeconds: 300
  # -- Node labels for controller pod assignment
  ## Ref: https://kubernetes.io/docs/user-guide/node-selection/
  ##
  nodeSelector:
    kubernetes.io/os: linux
  ## Liveness and readiness probe values
  ## Ref: https://kubernetes.io/docs/concepts/workloads/pods/pod-lifecycle/#container-probes
  ##
  ## startupProbe:
  ##   httpGet:
  ##     # should match container.healthCheckPath
  ##     path: "/healthz"
  ##     port: 10254
  ##     scheme: HTTP
  ##   initialDelaySeconds: 5
  ##   periodSeconds: 5
  ##   timeoutSeconds: 2
  ##   successThreshold: 1
  ##   failureThreshold: 5
  livenessProbe:
    httpGet:
      # should match container.healthCheckPath
      path: "/healthz"
      port: 10254
      scheme: HTTP
    initialDelaySeconds: 10
    periodSeconds: 10
    timeoutSeconds: 1
    successThreshold: 1
    failureThreshold: 5
  readinessProbe:
    httpGet:
      # should match container.healthCheckPath
      path: "/healthz"
      port: 10254
      scheme: HTTP
    initialDelaySeconds: 10
    periodSeconds: 10
    timeoutSeconds: 1
    successThreshold: 1
    failureThreshold: 3
  # -- Path of the health check endpoint. All requests received on the port defined by
  # the healthz-port parameter are forwarded internally to this path.
  healthCheckPath: "/healthz"
  # -- Address to bind the health check endpoint.
  # It is better to set this option to the internal node address
  # if the ingress nginx controller is running in the `hostNetwork: true` mode.
  healthCheckHost: ""
  # -- Annotations to be added to controller pods
  ##
  podAnnotations: {}
  replicaCount: 1
  # -- Define either 'minAvailable' or 'maxUnavailable', never both.
  minAvailable: 1
  # -- Define either 'minAvailable' or 'maxUnavailable', never both.
  # maxUnavailable: 1
  ## Define requests resources to avoid probe issues due to CPU utilization in busy nodes
  ## ref: https://github.com/kubernetes/ingress-nginx/issues/4735#issuecomment-551204903
  ## Ideally, there should be no limits.
  ## https://engineering.indeedblog.com/blog/2019/12/cpu-throttling-regression-fix/
  resources:
    ##  limits:
    ##    cpu: 100m
    ##    memory: 90Mi
    requests:
      cpu: 100m
      memory: 90Mi
  # Mutually exclusive with keda autoscaling
  autoscaling:
    apiVersion: autoscaling/v2
    enabled: false
    annotations: {}
    minReplicas: 1
    maxReplicas: 11
    targetCPUUtilizationPercentage: 50
    targetMemoryUtilizationPercentage: 50
    behavior: {}
    # scaleDown:
    #   stabilizationWindowSeconds: 300
    #   policies:
    #   - type: Pods
    #     value: 1
    #     periodSeconds: 180
    # scaleUp:
    #   stabilizationWindowSeconds: 300
    #   policies:
    #   - type: Pods
    #     value: 2
    #     periodSeconds: 60
  autoscalingTemplate: []
  # Custom or additional autoscaling metrics
  # ref: https://kubernetes.io/docs/tasks/run-application/horizontal-pod-autoscale/#support-for-custom-metrics
  # - type: Pods
  #   pods:
  #     metric:
  #       name: nginx_ingress_controller_nginx_process_requests_total
  #     target:
  #       type: AverageValue
  #       averageValue: 10000m
  # Mutually exclusive with hpa autoscaling
  keda:
    apiVersion: "keda.sh/v1alpha1"
    ## apiVersion changes with keda 1.x vs 2.x
    ## 2.x = keda.sh/v1alpha1
    ## 1.x = keda.k8s.io/v1alpha1
    enabled: false
    minReplicas: 1
    maxReplicas: 11
    pollingInterval: 30
    cooldownPeriod: 300
    restoreToOriginalReplicaCount: false
    scaledObject:
      annotations: {}
      # Custom annotations for ScaledObject resource
      #  annotations:
      # key: value
    triggers: []
    # - type: prometheus
    #   metadata:
    #     serverAddress: http://<prometheus-host>:9090
    #     metricName: http_requests_total
    #     threshold: '100'
    #     query: sum(rate(http_requests_total{deployment="my-deployment"}[2m]))
    behavior: {}
    # scaleDown:
    #   stabilizationWindowSeconds: 300
    #   policies:
    #   - type: Pods
    #     value: 1
    #     periodSeconds: 180
    # scaleUp:
    #   stabilizationWindowSeconds: 300
    #   policies:
    #   - type: Pods
    #     value: 2
    #     periodSeconds: 60
  # -- Enable mimalloc as a drop-in replacement for malloc.
  ## ref: https://github.com/microsoft/mimalloc
  ##
  enableMimalloc: true
  ## Override NGINX template
  customTemplate:
    configMapName: ""
    configMapKey: ""
  service:
    enabled: true
    # -- If enabled is adding an appProtocol option for Kubernetes service. An appProtocol field replacing annotations that were
    # using for setting a backend protocol. Here is an example for AWS: service.beta.kubernetes.io/aws-load-balancer-backend-protocol: http
    # It allows choosing the protocol for each backend specified in the Kubernetes service.
    # See the following GitHub issue for more details about the purpose: https://github.com/kubernetes/kubernetes/issues/40244
    # Will be ignored for Kubernetes versions older than 1.20
    ##
    appProtocol: true
    annotations: {}
    labels: {}
    # clusterIP: ""
    # -- List of IP addresses at which the controller services are available
    ## Ref: https://kubernetes.io/docs/user-guide/services/#external-ips
    ##
    externalIPs: []
    # -- Used by cloud providers to connect the resulting `LoadBalancer` to a pre-existing static IP according to https://kubernetes.io/docs/concepts/services-networking/service/#loadbalancer
    loadBalancerIP: ""
    loadBalancerSourceRanges: []
    enableHttp: true
    enableHttps: true
    ## Set external traffic policy to: "Local" to preserve source IP on providers supporting it.
    ## Ref: https://kubernetes.io/docs/tutorials/services/source-ip/#source-ip-for-services-with-typeloadbalancer
    # externalTrafficPolicy: ""
    ## Must be either "None" or "ClientIP" if set. Kubernetes will default to "None".
    ## Ref: https://kubernetes.io/docs/concepts/services-networking/service/#virtual-ips-and-service-proxies
    # sessionAffinity: ""
    ## Specifies the health check node port (numeric port number) for the service. If healthCheckNodePort isn’t specified,
    ## the service controller allocates a port from your cluster’s NodePort range.
    ## Ref: https://kubernetes.io/docs/tasks/access-application-cluster/create-external-load-balancer/#preserving-the-client-source-ip
    # healthCheckNodePort: 0
    # -- Represents the dual-stack-ness requested or required by this Service. Possible values are
    # SingleStack, PreferDualStack or RequireDualStack.
    # The ipFamilies and clusterIPs fields depend on the value of this field.
    ## Ref: https://kubernetes.io/docs/concepts/services-networking/dual-stack/
    ipFamilyPolicy: "SingleStack"
    # -- List of IP families (e.g. IPv4, IPv6) assigned to the service. This field is usually assigned automatically
    # based on cluster configuration and the ipFamilyPolicy field.
    ## Ref: https://kubernetes.io/docs/concepts/services-networking/dual-stack/
    ipFamilies:
      - IPv4
    ports:
      http: 80
      https: 443
    targetPorts:
      http: http
      https: https
    type: ClusterIP
    ## type: NodePort
    ## nodePorts:
    ##   http: 32080
    ##   https: 32443
    ##   tcp:
    ##     8080: 32808
    nodePorts:
      http: ""
      https: ""
      tcp: {}
      udp: {}
    external:
      enabled: true
    internal:
      # -- Enables an additional internal load balancer (besides the external one).
      enabled: false
      # -- Annotations are mandatory for the load balancer to come up. Varies with the cloud service.
      annotations: {}
      # loadBalancerIP: ""
      # -- Restrict access For LoadBalancer service. Defaults to 0.0.0.0/0.
      loadBalancerSourceRanges: []
      ## Set external traffic policy to: "Local" to preserve source IP on
      ## providers supporting it
      ## Ref: https://kubernetes.io/docs/tutorials/services/source-ip/#source-ip-for-services-with-typeloadbalancer
      # externalTrafficPolicy: ""
  # shareProcessNamespace enables process namespace sharing within the pod.
  # This can be used for example to signal log rotation using `kill -USR1` from a sidecar.
  shareProcessNamespace: false
  # -- Additional containers to be added to the controller pod.
  # See https://github.com/lemonldap-ng-controller/lemonldap-ng-controller as example.
  extraContainers: []
  #  - name: my-sidecar
  #    image: nginx:latest
  #  - name: lemonldap-ng-controller
  #    image: lemonldapng/lemonldap-ng-controller:0.2.0
  #    args:
  #      - /lemonldap-ng-controller
  #      - --alsologtostderr
  #      - --configmap=$(POD_NAMESPACE)/lemonldap-ng-configuration
  #    env:
  #      - name: POD_NAME
  #        valueFrom:
  #          fieldRef:
  #            fieldPath: metadata.name
  #      - name: POD_NAMESPACE
  #        valueFrom:
  #          fieldRef:
  #            fieldPath: metadata.namespace
  #    volumeMounts:
  #    - name: copy-portal-skins
  #      mountPath: /srv/var/lib/lemonldap-ng/portal/skins
  # -- Additional volumeMounts to the controller main container.
  extraVolumeMounts: []
  #  - name: copy-portal-skins
  #    mountPath: /var/lib/lemonldap-ng/portal/skins
  # -- Additional volumes to the controller pod.
  extraVolumes: []
  #  - name: copy-portal-skins
  #    emptyDir: {}
  # -- Containers, which are run before the app containers are started.
  extraInitContainers: []
  # - name: init-myservice
  #   image: busybox
  #   command: ['sh', '-c', 'until nslookup myservice; do echo waiting for myservice; sleep 2; done;']
  # -- Modules, which are mounted into the core nginx image. See values.yaml for a sample to add opentelemetry module
  extraModules: []
  #   containerSecurityContext:
  #     allowPrivilegeEscalation: false
  #
  # The image must contain a `/usr/local/bin/init_module.sh` executable, which
  # will be executed as initContainers, to move its config files within the
  # mounted volume.
  opentelemetry:
    enabled: false
    image: registry.k8s.io/ingress-nginx/opentelemetry:v20221114-controller-v1.5.1-6-ga66ee73c5@sha256:41076fd9fb4255677c1a3da1ac3fc41477f06eba3c7ebf37ffc8f734dad51d7c
    containerSecurityContext:
      allowPrivilegeEscalation: false
  admissionWebhooks:
    annotations: {}
    # ignore-check.kube-linter.io/no-read-only-rootfs: "This deployment needs write access to root filesystem".
    ## Additional annotations to the admission webhooks.
    ## These annotations will be added to the ValidatingWebhookConfiguration and
    ## the Jobs Spec of the admission webhooks.
    enabled: false
    # -- Additional environment variables to set
    extraEnvs: []
    # extraEnvs:
    #   - name: FOO
    #     valueFrom:
    #       secretKeyRef:
    #         key: FOO
    #         name: secret-resource
    # -- Admission Webhook failure policy to use
    failurePolicy: Fail
    # timeoutSeconds: 10
    port: 8443
    certificate: "/usr/local/certificates/cert"
    key: "/usr/local/certificates/key"
    namespaceSelector: {}
    objectSelector: {}
    # -- Labels to be added to admission webhooks
    labels: {}
    # -- Use an existing PSP instead of creating one
    existingPsp: ""
    networkPolicyEnabled: false
    service:
      annotations: {}
      # clusterIP: ""
      externalIPs: []
      # loadBalancerIP: ""
      loadBalancerSourceRanges: []
      servicePort: 443
      type: ClusterIP
    createSecretJob:
      securityContext:
        allowPrivilegeEscalation: false
      resources: {}
      # limits:
      #   cpu: 10m
      #   memory: 20Mi
      # requests:
      #   cpu: 10m
      #   memory: 20Mi
    patchWebhookJob:
      securityContext:
        allowPrivilegeEscalation: false
      resources: {}
    patch:
      enabled: true
      image:
        registry: registry.cn-hangzhou.aliyuncs.com
        image: google_containers/kube-webhook-certgen
        ## for backwards compatibility consider setting the full image url via the repository value below
        ## use *either* current default registry/image or repository format or installing chart by providing the values.yaml will fail
        ## repository:
        tag: v1.5.1
        #digest: sha256:39c5b2e3310dc4264d638ad28d9d1d96c4cbb2b2dcfb52368fe4e3c63f61e10f
        pullPolicy: IfNotPresent
      # -- Provide a priority class name to the webhook patching job
      ##
      priorityClassName: ""
      podAnnotations: {}
      nodeSelector:
        kubernetes.io/os: linux
      tolerations: []
      # -- Labels to be added to patch job resources
      labels: {}
      securityContext:
        runAsNonRoot: true
        runAsUser: 2000
        fsGroup: 2000
    # Use certmanager to generate webhook certs
    certManager:
      enabled: false
      # self-signed root certificate
      rootCert:
        duration: "" # default to be 5y
      admissionCert:
        duration: "" # default to be 1y
        # issuerRef:
        #   name: "issuer"
        #   kind: "ClusterIssuer"
  metrics:
    port: 10254
    portName: metrics
    # if this port is changed, change healthz-port: in extraArgs: accordingly
    enabled: false
    service:
      annotations: {}
      # prometheus.io/scrape: "true"
      # prometheus.io/port: "10254"
      # clusterIP: ""
      # -- List of IP addresses at which the stats-exporter service is available
      ## Ref: https://kubernetes.io/docs/user-guide/services/#external-ips
      ##
      externalIPs: []
      # loadBalancerIP: ""
      loadBalancerSourceRanges: []
      servicePort: 10254
      type: ClusterIP
      # externalTrafficPolicy: ""
      # nodePort: ""
    serviceMonitor:
      enabled: false
      additionalLabels: {}
      ## The label to use to retrieve the job name from.
      ## jobLabel: "app.kubernetes.io/name"
      namespace: ""
      namespaceSelector: {}
      ## Default: scrape .Release.Namespace only
      ## To scrape all, use the following:
      ## namespaceSelector:
      ##   any: true
      scrapeInterval: 30s
      # honorLabels: true
      targetLabels: []
      relabelings: []
      metricRelabelings: []
    prometheusRule:
      enabled: false
      additionalLabels: {}
      # namespace: ""
      rules: []
      # # These are just examples rules, please adapt them to your needs
      # - alert: NGINXConfigFailed
      #   expr: count(nginx_ingress_controller_config_last_reload_successful == 0) > 0
      #   for: 1s
      #   labels:
      #     severity: critical
      #   annotations:
      #     description: bad ingress config - nginx config test failed
      #     summary: uninstall the latest ingress changes to allow config reloads to resume
      # - alert: NGINXCertificateExpiry
      #   expr: (avg(nginx_ingress_controller_ssl_expire_time_seconds) by (host) - time()) < 604800
      #   for: 1s
      #   labels:
      #     severity: critical
      #   annotations:
      #     description: ssl certificate(s) will expire in less then a week
      #     summary: renew expiring certificates to avoid downtime
      # - alert: NGINXTooMany500s
      #   expr: 100 * ( sum( nginx_ingress_controller_requests{status=~"5.+"} ) / sum(nginx_ingress_controller_requests) ) > 5
      #   for: 1m
      #   labels:
      #     severity: warning
      #   annotations:
      #     description: Too many 5XXs
      #     summary: More than 5% of all requests returned 5XX, this requires your attention
      # - alert: NGINXTooMany400s
      #   expr: 100 * ( sum( nginx_ingress_controller_requests{status=~"4.+"} ) / sum(nginx_ingress_controller_requests) ) > 5
      #   for: 1m
      #   labels:
      #     severity: warning
      #   annotations:
      #     description: Too many 4XXs
      #     summary: More than 5% of all requests returned 4XX, this requires your attention
  # -- Improve connection draining when ingress controller pod is deleted using a lifecycle hook:
  # With this new hook, we increased the default terminationGracePeriodSeconds from 30 seconds
  # to 300, allowing the draining of connections up to five minutes.
  # If the active connections end before that, the pod will terminate gracefully at that time.
  # To effectively take advantage of this feature, the Configmap feature
  # worker-shutdown-timeout new value is 240s instead of 10s.
  ##
  lifecycle:
    preStop:
      exec:
        command:
          - /wait-shutdown
  priorityClassName: ""
# -- Rollback limit
##
revisionHistoryLimit: 10
## Default 404 backend
##
defaultBackend:
  ##
  enabled: false
  name: defaultbackend
  image:
    registry: registry.k8s.io
    image: defaultbackend-amd64
    ## for backwards compatibility consider setting the full image url via the repository value below
    ## use *either* current default registry/image or repository format or installing chart by providing the values.yaml will fail
    ## repository:
    tag: "1.5"
    pullPolicy: IfNotPresent
    # nobody user -> uid 65534
    runAsUser: 65534
    runAsNonRoot: true
    readOnlyRootFilesystem: true
    allowPrivilegeEscalation: false
  # -- Use an existing PSP instead of creating one
  existingPsp: ""
  extraArgs: {}
  serviceAccount:
    create: true
    name: ""
    automountServiceAccountToken: true
  # -- Additional environment variables to set for defaultBackend pods
  extraEnvs: []
  port: 8080
  ## Readiness and liveness probes for default backend
  ## Ref: https://kubernetes.io/docs/tasks/configure-pod-container/configure-liveness-readiness-probes/
  ##
  livenessProbe:
    failureThreshold: 3
    initialDelaySeconds: 30
    periodSeconds: 10
    successThreshold: 1
    timeoutSeconds: 5
  readinessProbe:
    failureThreshold: 6
    initialDelaySeconds: 0
    periodSeconds: 5
    successThreshold: 1
    timeoutSeconds: 5
  # -- Node tolerations for server scheduling to nodes with taints
  ## Ref: https://kubernetes.io/docs/concepts/configuration/assign-pod-node/
  ##
  tolerations: []
  #  - key: "key"
  #    operator: "Equal|Exists"
  #    value: "value"
  #    effect: "NoSchedule|PreferNoSchedule|NoExecute(1.6 only)"
  affinity: {}
  # -- Security Context policies for controller pods
  # See https://kubernetes.io/docs/tasks/administer-cluster/sysctl-cluster/ for
  # notes on enabling and using sysctls
  ##
  podSecurityContext: {}
  # -- Security Context policies for controller main container.
  # See https://kubernetes.io/docs/tasks/administer-cluster/sysctl-cluster/ for
  # notes on enabling and using sysctls
  ##
  containerSecurityContext: {}
  # -- Labels to add to the pod container metadata
  podLabels: {}
  # key: value
  # -- Node labels for default backend pod assignment
  ## Ref: https://kubernetes.io/docs/user-guide/node-selection/
  ##
  nodeSelector:
    kubernetes.io/os: linux
    ingress: "true"
  # -- Annotations to be added to default backend pods
  ##
  podAnnotations: {}
  replicaCount: 1
  minAvailable: 1
  resources: {}
  # limits:
  #   cpu: 10m
  #   memory: 20Mi
  # requests:
  #   cpu: 10m
  #   memory: 20Mi
  extraVolumeMounts: []
  ## Additional volumeMounts to the default backend container.
  #  - name: copy-portal-skins
  #    mountPath: /var/lib/lemonldap-ng/portal/skins
  extraVolumes: []
  ## Additional volumes to the default backend pod.
  #  - name: copy-portal-skins
  #    emptyDir: {}
  autoscaling:
    annotations: {}
    enabled: false
    minReplicas: 1
    maxReplicas: 2
    targetCPUUtilizationPercentage: 50
    targetMemoryUtilizationPercentage: 50
  service:
    annotations: {}
    # clusterIP: ""
    # -- List of IP addresses at which the default backend service is available
    ## Ref: https://kubernetes.io/docs/user-guide/services/#external-ips
    ##
    externalIPs: []
    # loadBalancerIP: ""
    loadBalancerSourceRanges: []
    servicePort: 80
    type: ClusterIP
  priorityClassName: ""
  # -- Labels to be added to the default backend resources
  labels: {}
## Enable RBAC as per https://github.com/kubernetes/ingress-nginx/blob/main/docs/deploy/rbac.md and https://github.com/kubernetes/ingress-nginx/issues/266
rbac:
  create: true
  scope: false
## If true, create & use Pod Security Policy resources
## https://kubernetes.io/docs/concepts/policy/pod-security-policy/
podSecurityPolicy:
  enabled: false
serviceAccount:
  create: true
  name: ""
  automountServiceAccountToken: true
  # -- Annotations for the controller service account
  annotations: {}
# -- Optional array of imagePullSecrets containing private registry credentials
## Ref: https://kubernetes.io/docs/tasks/configure-pod-container/pull-image-private-registry/
imagePullSecrets: []
# - name: secretName
# -- TCP service key-value pairs
## Ref: https://github.com/kubernetes/ingress-nginx/blob/main/docs/user-guide/exposing-tcp-udp-services.md
##
tcp: {}
#  8080: "default/example-tcp-svc:9000"
# -- UDP service key-value pairs
## Ref: https://github.com/kubernetes/ingress-nginx/blob/main/docs/user-guide/exposing-tcp-udp-services.md
##
udp: {}
#  53: "kube-system/kube-dns:53"
# -- Prefix for TCP and UDP ports names in ingress controller service
## Some cloud providers, like Yandex Cloud may have a requirements for a port name regex to support cloud load balancer integration
portNamePrefix: ""
# -- (string) A base64-encoded Diffie-Hellman parameter.
# This can be generated with: `openssl dhparam 4096 2> /dev/null | base64`
## Ref: https://github.com/kubernetes/ingress-nginx/tree/main/docs/examples/customization/ssl-dh-param

dhParam:

3.5 Using Ingress

3.5.1 Create the namespace

# Create a dedicated namespace for ingress

[root@k8s-master ingress-nginx]# kubectl create ns ingress-nginx

namespace/ingress-nginx created

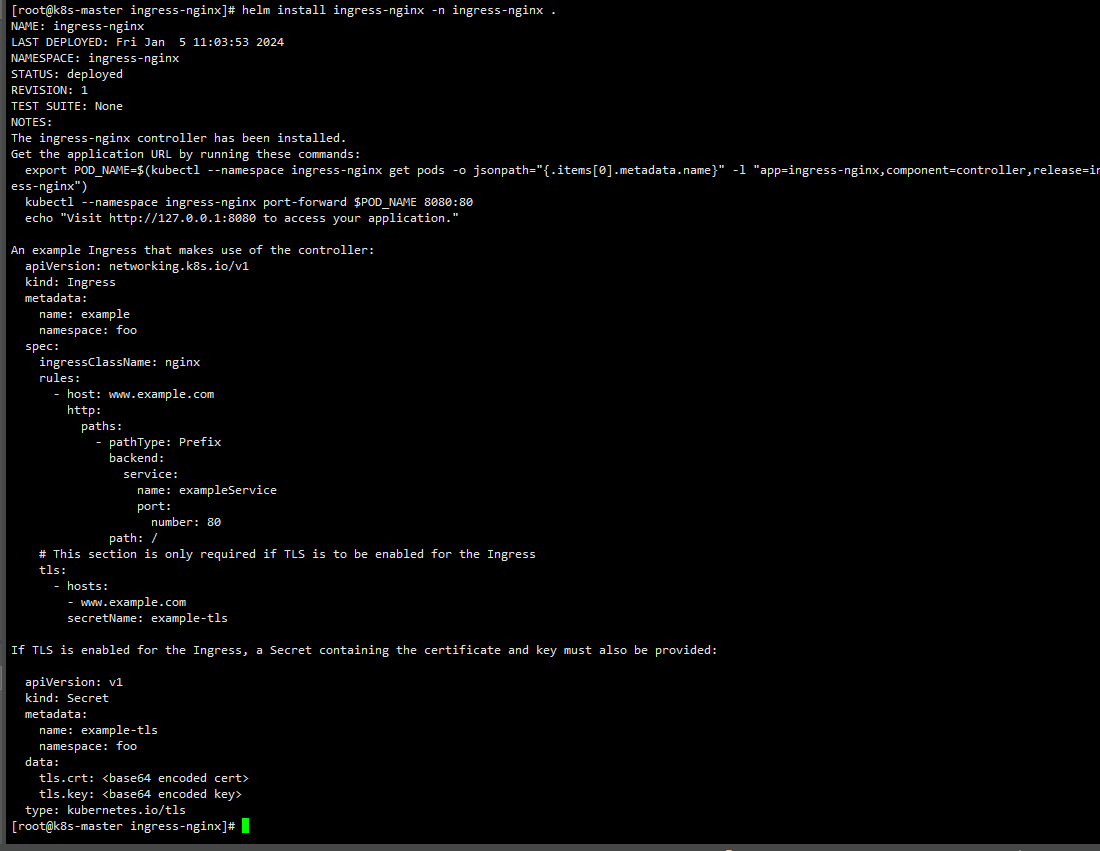

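3.5.2 Install ingress-nginx

# Label the node that should run ingress-nginx
[root@k8s-master ingress-nginx]# kubectl label node k8s-master ingress=true
node/k8s-master labeled

# Install ingress-nginx from the chart in the current directory
helm install ingress-nginx -n ingress-nginx .

If the command finishes without errors, the release has been installed.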
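Helm reporting success only means the release objects were created. As a minimal sketch, the helper below waits until the controller pod is actually ready. The function name is hypothetical, and both the pod label app.kubernetes.io/component=controller (the chart default) and the 120-second timeout are assumptions to adjust for your setup.

```shell
#!/bin/sh
# Hypothetical helper: block until the ingress-nginx controller pod is Ready.
# Assumes the chart's default pod label app.kubernetes.io/component=controller.
wait_for_ingress_controller() {
  local ns="${1:-ingress-nginx}" timeout="${2:-120s}"
  kubectl wait --namespace "$ns" \
    --for=condition=ready pod \
    --selector=app.kubernetes.io/component=controller \
    --timeout="$timeout"
}

# Usage (needs kubectl access to the cluster):
#   wait_for_ingress_controller ingress-nginx 120s
```

The function only wraps kubectl wait, so a non-zero exit status indicates that the pod did not become ready within the timeout.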

3.5.3 Create a Service for in-cluster access

# Create the Deployment
kubectl create deployment nginx-service --image=nginx

# Expose it as a ClusterIP Service (matching the output below)
[root@master ~]# kubectl expose deployment nginx-service --port=80 --target-port=80 --type=ClusterIP

# Check the Service

[root@k8s-master ingress]# kubectl get service nginx-service

NAME TYPE CLUSTER-IP EXTERNAL-IP PORT(S) AGE

nginx-service ClusterIP 10.104.195.8 <none> 80/TCP 8d

3.5.4 HTTP proxy

[root@k8s-master k8s]# mkdir ingress

[root@k8s-master ingress]# vi wolfcide-ingress.yaml

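The file contents are as follows:

apiVersion: networking.k8s.io/v1
kind: Ingress # resource type: Ingress
metadata:
  name: wolfcode-nginx-ingress
  annotations:
    kubernetes.io/ingress.class: "nginx"
    nginx.ingress.kubernetes.io/rewrite-target: /
spec:
  rules: # Ingress rules; more than one can be configured
  - host: k8s.wolfcode.cn # host name; a wildcard first label such as *.wolfcode.cn is allowed
    http:
      paths: # comparable to nginx location blocks; more than one can be configured
      # pathType controls how the path is matched:
      #   ImplementationSpecific: requires an IngressClass, whose rules decide the matching
      #   Exact: the URL path must match the path exactly, case-sensitively
      #   Prefix: prefix match on path elements separated by /
      - pathType: Prefix
        backend:
          service:
            name: nginx-service # Service to proxy to
            port:
              number: 80 # Service port
        path: /api # comparable to a prefix match in an nginx location

# Create the Ingress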

[root@k8s-master ingress]# kubectl create -f wolfcide-ingress.yaml

ingress.networking.k8s.io/wolfcode-nginx-ingress created

# List the Ingress

[root@k8s-master ingress]# kubectl get ingress

NAME CLASS HOSTS ADDRESS PORTS AGE

wolfcode-nginx-ingress <none> k8s.wolfcode.cn 10.102.1.91 80 116s

#查看运行详细信息

[root@k8s-master ingress]# kubectl get po -n ingress-nginx -o wide

NAME READY STATUS RESTARTS AGE IP NODE NOMINATED NODE READINESS GATES

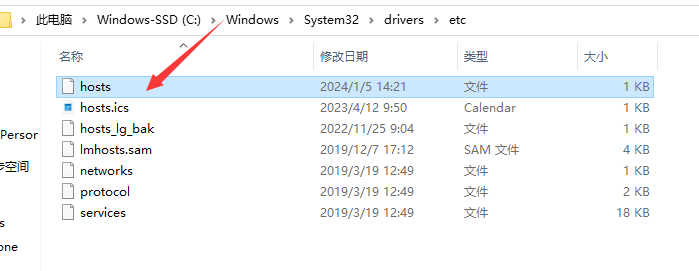

ingress-nginx-controller-zfvqg 1/1 Running 0 3h19m 192.168.235.129 k8s-node1 <none> 修改目标目录下hosts文件

加入以下内容:

192.168.235.129 k8s.wolfcode.cn
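Editing the hosts file by hand tends to leave duplicate lines after a few experiments. The sketch below appends the entry only when the host name is not mapped yet. The function name add_hosts_entry is hypothetical, and the demo writes to a scratch file rather than the real /etc/hosts.

```shell
#!/bin/sh
# Hypothetical helper: add_hosts_entry IP HOSTNAME [FILE]
# Appends "IP HOSTNAME" to FILE unless HOSTNAME is already mapped there.
add_hosts_entry() {
  local ip="$1" host="$2" file="${3:-/etc/hosts}"
  # Look for the host name as a whole field on any non-comment line.
  if awk -v h="$host" '
       $1 ~ /^#/ { next }
       { for (i = 2; i <= NF; i++) if ($i == h) found = 1 }
       END { exit !found }
     ' "$file" 2>/dev/null; then
    echo "$host already present in $file"
  else
    printf '%s %s\n' "$ip" "$host" >> "$file"
    echo "added $host to $file"
  fi
}

# Demo on a scratch file; pass /etc/hosts (as root) to change the real one.
demo_hosts=$(mktemp)
add_hosts_entry 192.168.235.129 k8s.wolfcode.cn "$demo_hosts"
add_hosts_entry 192.168.235.129 k8s.wolfcode.cn "$demo_hosts"
cat "$demo_hosts"
rm -f "$demo_hosts"
```

Running the helper twice leaves a single line in the file, which is why the second call only reports that the entry exists.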

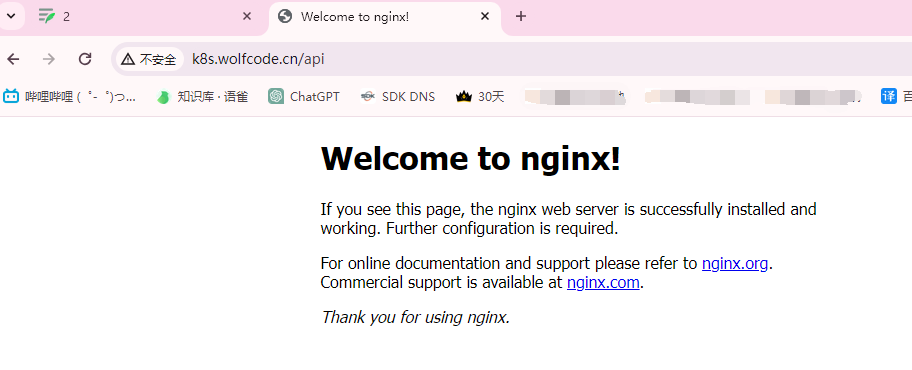

Open http://k8s.wolfcode.cn/api in a browser; the page loads successfully.
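The same check can be made from a terminal without touching the hosts file, because curl's --resolve option pins a host name to an IP for a single request. The sketch below only prints the command so it can be reviewed before running. The function name is hypothetical, and it assumes the controller listens on ports 80 and 443 of the node, as the hosts entry above implies.

```shell
#!/bin/sh
# Hypothetical helper: ingress_curl_cmd HOST NODE_IP [PATH] [SCHEME]
# Prints a curl command that sends the request for HOST directly to NODE_IP.
ingress_curl_cmd() {
  local host="$1" ip="$2" path="${3:-/}" scheme="${4:-http}"
  local port=80 extra=""
  if [ "$scheme" = "https" ]; then
    port=443
    extra="-k "   # a self-signed certificate will not verify, so skip the check
  fi
  printf 'curl -i %s--resolve %s:%s:%s %s://%s%s\n' \
    "$extra" "$host" "$port" "$ip" "$scheme" "$host" "$path"
}

# Print the command for the HTTP example in this section.
ingress_curl_cmd k8s.wolfcode.cn 192.168.235.129 /api
```

Pasting the printed command into a shell that can reach the node should return the response headers and body from the backend Service.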

Multiple-host matching: route requests according to the host name they are addressed to.

apiVersion: networking.k8s.io/v1
kind: Ingress # resource type: Ingress
metadata:
  name: wolfcode-nginx-ingress
  annotations:
    kubernetes.io/ingress.class: "nginx"
    nginx.ingress.kubernetes.io/rewrite-target: /
spec:
  rules: # Ingress rules; more than one can be configured
  - host: k8s.wolfcode.cn # first host
    http:
      paths: # comparable to nginx location blocks; more than one can be configured
      - pathType: Prefix # prefix match on path elements separated by /
        backend:
          service:
            name: nginx-service # Service to proxy to
            port:
              number: 80 # Service port
        path: /api # comparable to a prefix match in an nginx location
      - pathType: Exact # the URL path must match exactly, case-sensitively
        backend:
          service:
            name: nginx-service # Service to proxy to
            port:
              number: 80 # Service port
        path: /
  - host: api.wolfcode.cn # second host
    http:
      paths:
      - pathType: Prefix
        backend:
          service:
            name: nginx-service # Service to proxy to
            port:
              number: 80 # Service port
        path: /
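The difference between the Prefix and Exact path types is easy to get wrong, because Prefix compares whole path elements rather than raw string prefixes. The sketch below imitates the matching rules described in the Kubernetes Ingress documentation in plain shell. It is only an illustration with a hypothetical function name, not the code the controller runs.

```shell
#!/bin/sh
# Hypothetical helper: path_matches TYPE RULE_PATH REQUEST_PATH
# Returns 0 when REQUEST_PATH matches RULE_PATH under the given pathType.
path_matches() {
  local kind="$1" rule="$2" req="$3" base
  case "$kind" in
    Exact)
      # Whole path must be identical, including case and trailing slash.
      [ "$req" = "$rule" ]
      ;;
    Prefix)
      # Ignore a trailing slash on the rule, then match element by element:
      # /api matches /api and /api/v1, but not /apiv1.
      base="${rule%/}"
      case "$req" in
        "$base"|"$base"/*) return 0 ;;
        *) return 1 ;;
      esac
      ;;
    *)
      echo "unsupported pathType: $kind" >&2
      return 2
      ;;
  esac
}

for p in /api /api/v1 /apiv1; do
  if path_matches Prefix /api "$p"; then
    echo "Prefix /api vs $p: match"
  else
    echo "Prefix /api vs $p: no match"
  fi
done
```

With the manifest above, this is why an Exact rule on / serves only the root URL, while a Prefix rule on / catches every path for that host.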

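To match a path and pass part of it on to the backend service, set the rewrite target in the nginx.ingress.kubernetes.io/rewrite-target annotation and use capture groups in the path to capture that part.

For example, to match a path such as /api/some-parameter and pass some-parameter on to the backend service, configure the Ingress as follows:

apiVersion: networking.k8s.io/v1
kind: Ingress
metadata:
  name: wolfcode-nginx-ingress
  annotations:
    kubernetes.io/ingress.class: "nginx"
    nginx.ingress.kubernetes.io/rewrite-target: /$2
spec:
  rules:
  - host: k8s.wolfcode.cn
    http:
      paths:
      - pathType: ImplementationSpecific # regular-expression paths are not valid Prefix or Exact paths
        path: /api(/|$)(.*)
        backend:
          service:
            name: nginx-service
            port:
              number: 80

In this configuration, nginx.ingress.kubernetes.io/rewrite-target: /$2 refers to the second capture group of the path expression. In /api(/|$)(.*), the first group (/|$) matches either the slash after /api or the end of the path, and the second group (.*) captures everything that follows. A request for /api/some-parameter is therefore rewritten to /some-parameter before it is passed to the backend service.

The number after $ must correspond to a group that exists in the path: with a single-group pattern such as /api/(.*), the rewrite target would have to be /$1. Adjust the regular expression and the capture groups to the paths you expect.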
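A rewrite rule can be tried out locally before it is applied to the cluster, by running the same regular expression through sed. The sketch below assumes the path pattern /api(/|$)(.*) with the rewrite target /$2, which is the form shown in the ingress-nginx rewrite documentation. With a single-group pattern such as /api/(.*) the reference would be $1 instead. The function name is hypothetical, and sed is case-sensitive here, so this only approximates the controller's matching.

```shell
#!/bin/sh
# Hypothetical helper: show what the rewrite would turn a request path into.
# Pattern: ^/api(/|$)(.*)   Rewrite target: /$2  (written as /\2 for sed)
rewrite_path() {
  printf '%s\n' "$1" | sed -E 's#^/api(/|$)(.*)#/\2#'
}

for p in /api /api/ /api/some-parameter /apix; do
  printf '%s -> %s\n' "$p" "$(rewrite_path "$p")"
done
```

Paths that do not match the pattern, such as /apix, pass through unchanged, which mirrors the fact that the Ingress rule would not apply to them at all.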

3.5.5 HTTPS proxy

Delete all existing Ingress resources first:

kubectl delete ingress --all

1. Create the certificate

# Generate the certificate

[root@k8s-master ingress]# openssl req -x509 -sha256 -nodes -days 365 -newkey rsa:2048 -keyout tls.key -out tls.crt -subj "/C=CN/ST=BJ/L=BJ/O=nginx/CN=itheima.com"

Generating a 2048 bit RSA private key

..............................................................................................+++

............................................................................+++

writing new private key to 'tls.key'

-----
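The certificate generated above carries only CN=itheima.com, while the Ingress in this section serves nginx.itheima.com, and current clients compare the host name against the subjectAltName extension. The sketch below shows one way to issue a self-signed certificate whose SAN covers the host and to check the match. It assumes OpenSSL 1.1.1 or newer (for the -addext option), and both function names are hypothetical.

```shell
#!/bin/sh
# Hypothetical helper: make_tls_cert HOST [DIR]
# Writes DIR/tls.key and DIR/tls.crt, self-signed, with HOST as CN and SAN.
# Assumes OpenSSL 1.1.1+ because of -addext.
make_tls_cert() {
  local host="$1" dir="${2:-.}"
  openssl req -x509 -sha256 -nodes -days 365 -newkey rsa:2048 \
    -keyout "$dir/tls.key" -out "$dir/tls.crt" \
    -subj "/C=CN/ST=BJ/L=BJ/O=nginx/CN=$host" \
    -addext "subjectAltName=DNS:$host" 2>/dev/null
}

# Hypothetical helper: cert_matches_host CERT_FILE HOST
# Returns 0 when the certificate is valid for HOST.
cert_matches_host() {
  openssl x509 -in "$1" -noout -checkhost "$2" | grep -q 'does match'
}

# Usage:
#   make_tls_cert nginx.itheima.com . && cert_matches_host tls.crt nginx.itheima.com
```

The resulting tls.key and tls.crt can be passed to kubectl create secret tls in the same way as the files generated above. A self-signed certificate still triggers a browser warning, but the host name itself will match.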

# Create the TLS Secret

[root@k8s-master ingress]# kubectl create secret tls tls-secret --key tls.key --cert tls.crt

secret/tls-secret created

2. Create and configure ingress-https.yaml

apiVersion: networking.k8s.io/v1
kind: Ingress
metadata:
  name: wolfcode-nginx-ingress-https
  annotations:
    kubernetes.io/ingress.class: "nginx"
    nginx.ingress.kubernetes.io/rewrite-target: /
spec:
  tls:
  - hosts:
    - nginx.itheima.com
    secretName: tls-secret
  rules:
  - host: nginx.itheima.com
    http:
      paths:
      - pathType: Prefix
        path: /
        backend:
          service:
            name: nginx-service
            port:
              number: 80

# Create the Ingress resource

[root@k8s-master ingress]# kubectl create -f ingress-https.yaml

ingress.networking.k8s.io/wolfcode-nginx-ingress-https created

# Verify the Ingress: check that it was created successfully.
# The HOSTS column should show nginx.itheima.com and the ADDRESS column an assigned IP address.

[root@k8s-master ingress]# kubectl get ing

NAME CLASS HOSTS ADDRESS PORTS AGE

wolfcode-nginx-ingress-https <none> nginx.itheima.com 10.102.1.91 80, 443 22s

# Show the Ingress in detail
[root@k8s-master ingress]# kubectl describe ing wolfcode-nginx-ingress-https
----
TLS:
  tls-secret terminates nginx.itheima.com
Rules:
  Host               Path  Backends
  ----               ----  --------
  nginx.itheima.com
                     /   nginx-service:80 (10.244.1.13:80)
----

# Check the Service

[root@k8s-master ingress]# kubectl get service nginx-service

NAME TYPE CLUSTER-IP EXTERNAL-IP PORT(S) AGE

nginx-service ClusterIP 10.104.195.8 <none> 80/TCP 8d

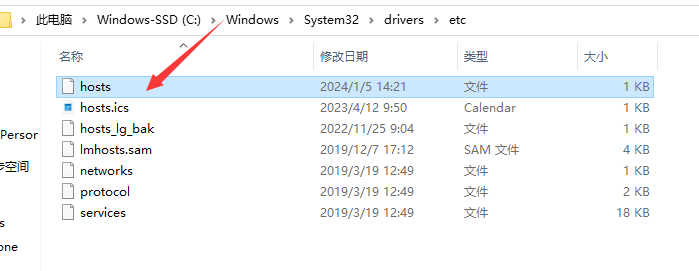

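Update the local hosts file (optional):

Map the domain nginx.itheima.com to the IP address at which the ingress controller is reachable (the same node IP used in the HTTP example). Edit the local /etc/hosts file and add a line like the following:

192.168.235.129 nginx.itheima.com

3. Access the site

From a browser:

- Open a browser and enter https://nginx.itheima.com in the address bar. Because the certificate is self-signed, the browser shows a security warning that must be accepted before the page loads.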