Oracle 10g RAC + ASM

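Goals: install the Clusterware and upgrade it to 10.2.0.4

Install the database software, using raw devices for the CRS registry (OCR) and the voting disk, and ASM for database storage

Upgrade the database to 10.2.0.4

Create the database instances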

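Network address plan:

| IP address | Hostname | Alias |
| --- | --- | --- |
| 192.168.1.171 | rac1 | rac1.oracle.com |
| 192.168.1.172 | rac1-vip | |
| 192.168.1.173 | rac2 | rac2.oracle.com |
| 192.168.1.174 | rac2-vip | |
| 172.168.1.191 | rac1-priv | |
| 172.168.1.192 | rac2-priv | |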

Create a directory, mount the installation DVD, and install the packages Oracle requires

[root@rac1 ~]# mkdir /media/disk

[root@rac1 ~]# mount /dev/cdrom /media/disk

mount: block device /dev/cdrom is write-protected, mounting read-only

[root@rac1 yum.repos.d]# cat public-yum-el5.repo

[oel5]

name = Enterprise Linux 5.5 DVD

baseurl = file:///media/disk/Server/

gpgcheck = 0

enabled = 1

yum -y install oracle-validated
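The repository file shown above can also be written from a script instead of by hand. The following is only a sketch: the function name `write_dvd_repo` is made up for illustration, and it assumes the DVD is mounted at the path you pass in.

```shell
#!/bin/bash
# Hypothetical helper: write a yum repo file that points at a mounted DVD.
# $1 = target .repo file, $2 = DVD mount point (for example /media/disk)
write_dvd_repo() {
    cat > "$1" <<EOF
[oel5]
name = Enterprise Linux 5.5 DVD
baseurl = file://$2/Server/
gpgcheck = 0
enabled = 1
EOF
}

# Example (as root):
#   write_dvd_repo /etc/yum.repos.d/public-yum-el5.repo /media/disk
#   yum -y install oracle-validated
```

Because the mount point is a parameter, the same helper can be reused on rac2 if the DVD is mounted somewhere else there.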

Create a directory for the Clusterware, database, and patch files, and add the hosts entries

mkdir /s01

chown oracle:dba /s01

[root@rac1 ~]# passwd oracle

Changing password for user oracle.

New UNIX password:

Retype new UNIX password:

passwd: all authentication tokens updated successfully.

[root@rac1 ~]# cat /etc/hosts

# Do not remove the following line, or various programs

# that require network functionality will fail.

127.0.0.1 localhost.localdomain localhost

::1 localhost6.localdomain6 localhost6

#add by Stark Shaw

192.168.1.171 rac1 rac1.oracle.com

192.168.1.172 rac1-vip

192.168.1.173 rac2 rac2.oracle.com

192.168.1.174 rac2-vip

172.168.1.191 rac1-priv

172.168.1.192 rac2-priv

#end
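Since both nodes need identical hosts entries, a quick check can catch a missing name before the installer does. This is a minimal sketch; `missing_hosts` and `REQUIRED_NAMES` are names invented here, and the list simply mirrors the address plan above.

```shell
#!/bin/bash
# Names every node's hosts file is expected to resolve (taken from the plan above).
REQUIRED_NAMES="rac1 rac1-vip rac2 rac2-vip rac1-priv rac2-priv"

# Hypothetical helper: print each required name that has no
# uncommented entry in the hosts file given as $1.
missing_hosts() {
    hosts_file=$1
    for name in $REQUIRED_NAMES; do
        awk -v n="$name" '
            /^[ \t]*#/ { next }                                   # skip comment lines
            { for (i = 2; i <= NF; i++) if ($i == n) found = 1 }  # names start at field 2
            END { exit !found }
        ' "$hosts_file" || echo "$name"
    done
}

# Example: missing_hosts /etc/hosts    (no output means all names are present)
```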

[On host rac2]

updatedb

locate bak

rm -rf `locate bak`

updatedb

locate bak

system-config-network-tui

172.168.1.192 rac2-priv eth1

192.168.1.173 rac2 rac2.oracle.com eth0

service network restart

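Add the disks: one fixed-size disk named ocr, 2 GB

Three disks named dbshare[1,2,3], 5 GB each, fixed size

In the Virtual Media Manager (under the File menu), mark the newly created disks as shareable

On rac2, attach the same existing disks [note: the SATA ports must match those used on rac1]

On host rac1: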

[root@rac1 ~]# fdisk -l |grep bytes

Disk /dev/sdb doesn't contain a valid partition table

Disk /dev/sdc doesn't contain a valid partition table

Disk /dev/sdd doesn't contain a valid partition table

Disk /dev/sde doesn't contain a valid partition table

Disk /dev/dm-0 doesn't contain a valid partition table

Disk /dev/dm-1 doesn't contain a valid partition table

Disk /dev/sda: 64.4 GB, 64424509440 bytes

Units = cylinders of 16065 * 512 = 8225280 bytes

Disk /dev/sdb: 2147 MB, 2147483648 bytes

Units = cylinders of 16065 * 512 = 8225280 bytes

Disk /dev/sdc: 5368 MB, 5368709120 bytes

Units = cylinders of 16065 * 512 = 8225280 bytes

Disk /dev/sdd: 5368 MB, 5368709120 bytes

Units = cylinders of 16065 * 512 = 8225280 bytes

Disk /dev/sde: 5368 MB, 5368709120 bytes

Units = cylinders of 16065 * 512 = 8225280 bytes

Disk /dev/dm-0: 62.1 GB, 62176362496 bytes

Units = cylinders of 16065 * 512 = 8225280 bytes

Disk /dev/dm-1: 2113 MB, 2113929216 bytes

Units = cylinders of 16065 * 512 = 8225280 bytes

Partition sdb into two partitions (these become the raw devices for the OCR and the voting disk)

[root@rac1 ~]# fdisk /dev/sdb

Device contains neither a valid DOS partition table, nor Sun, SGI or OSF disklabel

Building a new DOS disklabel. Changes will remain in memory only,

until you decide to write them. After that, of course, the previous

content won't be recoverable.

Warning: invalid flag 0x0000 of partition table 4 will be corrected by w(rite)

Command (m for help): n

Command action

e extended

p primary partition (1-4)

p

Partition number (1-4): 1

First cylinder (1-261, default 1):

Using default value 1

Last cylinder or +size or +sizeM or +sizeK (1-261, default 261): +1000M

Command (m for help): n

Command action

e extended

p primary partition (1-4)

p

Partition number (1-4): 2

First cylinder (124-261, default 124):

Using default value 124

Last cylinder or +size or +sizeM or +sizeK (124-261, default 261):

Using default value 261

Command (m for help): w

The partition table has been altered!

Calling ioctl() to re-read partition table.

WARNING: Re-reading the partition table failed with error 16: Device or resource busy.

The kernel still uses the old table.

The new table will be used at the next reboot.

Syncing disks.

partprobe /dev/sdb

[root@rac1 ~]# ls -l /dev/sdb*

brw-r----- 1 root disk 8, 16 Jun 21 01:29 /dev/sdb

brw-r----- 1 root disk 8, 17 Jun 21 01:30 /dev/sdb1

brw-r----- 1 root disk 8, 18 Jun 21 01:30 /dev/sdb2
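The interactive fdisk session above can be replayed by piping the same answers into fdisk. The sketch below only generates the keystrokes; `fdisk_answers` is a hypothetical helper name, and actually piping it into fdisk (shown in a comment) destroys whatever is on the target disk, so treat it as an illustration rather than a ready-made procedure.

```shell
#!/bin/bash
# Hypothetical helper: print the answers typed in the fdisk session above,
# one per line. Empty lines accept fdisk's default value.
#   n, p, 1, <default first cylinder>, +1000M   -> first partition of about 1 GB
#   n, p, 2, <default first>, <default last>    -> second partition, rest of disk
#   w                                           -> write the table and quit
fdisk_answers() {
    printf '%s\n' n p 1 '' +1000M n p 2 '' '' w
}

# Example (as root, DESTRUCTIVE, assumes /dev/sdb is the 2 GB ocr disk):
#   fdisk_answers | fdisk /dev/sdb
#   partprobe /dev/sdb
```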

[root@rac1 ~]# cd /etc/udev/rules.d/

[root@rac1 rules.d]# vim 60-raw.rules

# Enter raw device bindings here.

#

# An example would be:

# ACTION=="add", KERNEL=="sda", RUN+="/bin/raw /dev/raw/raw1 %N"

# to bind /dev/raw/raw1 to /dev/sda, or

# ACTION=="add", ENV{MAJOR}=="8", ENV{MINOR}=="1", RUN+="/bin/raw /dev/raw/raw2 %M %m"

# to bind /dev/raw/raw2 to the device with major 8, minor 1.

#add by stark

ACTION=="add", KERNEL=="sdb1", RUN+="/bin/raw /dev/raw/raw1 %N"

ACTION=="add", KERNEL=="sdb2", RUN+="/bin/raw /dev/raw/raw2 %N"

ACTION=="add", KERNEL=="raw*", OWNER=="oracle", GROUP=="oinstall", MODE=="0660"

"60-raw.rules" 11L, 538C written

[root@rac1 rules.d]# start_udev

Starting udev: [ OK ]

[root@rac1 rules.d]# ls /dev/raw/* -l

crw-rw---- 1 oracle oinstall 162, 1 Jun 21 01:38 /dev/raw/raw1

crw-rw---- 1 oracle oinstall 162, 2 Jun 21 01:38 /dev/raw/raw2

[root@rac1 rules.d]# touch 99-oracle-asmdevices.rules

Run the following loop

for i in c d e   # c, d and e are the letters of the non-system disks currently present

do

echo "KERNEL==\"sd$i\", BUS==\"scsi\", PROGRAM==\"/sbin/scsi_id -g -u -s %p\", RESULT==\"`scsi_id -g -u -s /block/sd$i`\", NAME=\"asm-disk$i\", OWNER=\"oracle\", GROUP=\"oinstall\", MODE=\"0660\""

done

The output, which becomes the content of 99-oracle-asmdevices.rules, is as follows

KERNEL=="sdc", BUS=="scsi", PROGRAM=="/sbin/scsi_id -g -u -s %p", RESULT=="SATA_VBOX_HARDDISK_VBda106253-7fed37ca_", NAME="asm-diskc", OWNER="oracle", GROUP="oinstall", MODE="0660"

KERNEL=="sdd", BUS=="scsi", PROGRAM=="/sbin/scsi_id -g -u -s %p", RESULT=="SATA_VBOX_HARDDISK_VBa3720023-d5ef9f25_", NAME="asm-diskd", OWNER="oracle", GROUP="oinstall", MODE="0660"

KERNEL=="sde", BUS=="scsi", PROGRAM=="/sbin/scsi_id -g -u -s %p", RESULT=="SATA_VBOX_HARDDISK_VBaaff0479-8f5486db_", NAME="asm-diske", OWNER="oracle", GROUP="oinstall", MODE="0660"
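The escaped quotes in the echo loop are easy to get wrong, so the rule line can be built with printf instead. This is a sketch under the assumption that the rule format is exactly the one shown above; `asm_udev_rule` is a name invented for the example.

```shell
#!/bin/bash
# Hypothetical helper: print one udev rule in the format used above.
# $1 = disk letter (for example c), $2 = SCSI id reported by scsi_id for that disk
asm_udev_rule() {
    printf 'KERNEL=="sd%s", BUS=="scsi", PROGRAM=="/sbin/scsi_id -g -u -s %%p", RESULT=="%s", NAME="asm-disk%s", OWNER="oracle", GROUP="oinstall", MODE="0660"\n' \
        "$1" "$2" "$1"
}

# Example (as root, on the EL5 system described here):
#   for i in c d e; do
#       asm_udev_rule "$i" "$(scsi_id -g -u -s /block/sd$i)"
#   done >> /etc/udev/rules.d/99-oracle-asmdevices.rules
```

Matching on the SCSI id rather than only on the kernel name keeps the asm-disk names stable even if the disks are detected in a different order on rac2.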

Restart udev and check the ASM device bindings

[root@rac1 rules.d]# start_udev

Starting udev: [ OK ]

[root@rac1 rules.d]# ll /dev/asm-disk*

brw-rw---- 1 oracle oinstall 8, 32 Jun 21 01:51 /dev/asm-diskc

brw-rw---- 1 oracle oinstall 8, 48 Jun 21 01:51 /dev/asm-diskd

brw-rw---- 1 oracle oinstall 8, 64 Jun 21 01:51 /dev/asm-diske

[root@rac1 rules.d]# scp 60-raw.rules 99-oracle-asmdevices.rules rac2:`pwd`

The authenticity of host 'rac2 (192.168.1.173)' can't be established.

RSA key fingerprint is fa:dd:a6:17:c0:a8:9b:f9:a8:82:ae:8c:4b:d2:90:44.

Are you sure you want to continue connecting (yes/no)? yes

Warning: Permanently added 'rac2,192.168.1.173' (RSA) to the list of known hosts.

root@rac2's password:

60-raw.rules 100% 538 0.5KB/s 00:00

99-oracle-asmdevices.rules

[root@rac2 rules.d]# start_udev

Starting udev: [ OK ]

[root@rac2 rules.d]# ll /dev/raw/raw*

crw-rw---- 1 oracle oinstall 162, 1 Jun 21 02:00 /dev/raw/raw1

crw-rw---- 1 oracle oinstall 162, 2 Jun 21 02:00 /dev/raw/raw2

[root@rac2 ~]# ll /dev/asm-disk*

brw-rw---- 1 oracle oinstall 8, 32 Jun 21 02:30 /dev/asm-diskc

brw-rw---- 1 oracle oinstall 8, 48 Jun 21 02:30 /dev/asm-diskd

brw-rw---- 1 oracle oinstall 8, 64 Jun 21 02:30 /dev/asm-diske

Passwordless SSH login

[root@rac1 rules.d]# su - oracle

[oracle@rac1 ~]$ ssh-keygen -t rsa

Generating public/private rsa key pair.

Enter file in which to save the key (/home/oracle/.ssh/id_rsa):

Created directory '/home/oracle/.ssh'.

Enter passphrase (empty for no passphrase):

Enter same passphrase again:

Your identification has been saved in /home/oracle/.ssh/id_rsa.

Your public key has been saved in /home/oracle/.ssh/id_rsa.pub.

The key fingerprint is:

6f:56:64:60:0a:55:27:93:18:7f:01:bc:13:8c:f6:0b oracle@rac1.oracle.com

[oracle@rac1 ~]$ ssh-keygen -t dsa

Generating public/private dsa key pair.

Enter file in which to save the key (/home/oracle/.ssh/id_dsa):

Enter passphrase (empty for no passphrase):

Enter same passphrase again:

Your identification has been saved in /home/oracle/.ssh/id_dsa.

Your public key has been saved in /home/oracle/.ssh/id_dsa.pub.

The key fingerprint is:

94:fb:96:68:5c:d6:6a:3c:c3:a1:b4:6d:59:50:bb:cd oracle@rac1.oracle.com

[oracle@rac1 ~]$ cd ~/.ssh/

[oracle@rac1 .ssh]$ ls

id_dsa id_dsa.pub id_rsa id_rsa.pub

[oracle@rac1 .ssh]$ cat id_rsa.pub > authorized_keys

[oracle@rac1 .ssh]$ cat id_dsa.pub >> authorized_keys

[oracle@rac1 .ssh]$ cat authorized_keys

ssh-rsa AAAAB3NzaC1yc2EAAAABIwAAAQEAsUUZyWS3YZaB/PEJJzzc9KwIqrvLh+lquTvZMMju4EC3zyvPd56lHl/2hpOOECBDGmLOtH/gGGbDDuj4uFzJ9JXbWFbve9etIBVbJuqz7LNis4yZjBDxtWgUrukmU8T7XvNVnEAxIkwX6C3UtHeQOl/XT1LJA9CHuwKpHNGxg5VCUSnYA3fqiisAqnV7nubSAueip3fCFd2VppiBqz5lywos1pSIN/KIojvVUwVyj9MR0MjwpXKzA0NCHRDreqLB7orRoKh3lN98NNAfKcnZ+p97244sZbNxPbpOg2CvVcUynixpTWLF4Asb8GHyMvM4mfq9D4gVleVM/GEKFwA8QQ== oracle@rac1.oracle.com

ssh-dss AAAAB3NzaC1kc3MAAACBAKkQaIy7bNRhsSZ5V/tbM7xiivgyyNM2GVHyGoP5n8CCSnJT9Cnz7PpDgwZEIdSGBQuzkH06yRq4xq00zDi+hTgGcexc/TwRh2Bf5RtQ22bTl9TXt8dIEz7OWephJKdUrzRbbDhYX8L01VmVqiGnoPJwyUyfSonGConUcd0YPM8XAAAAFQCKb3TOxXhWEUitICgZq+ShkM2nVQAAAIA7akPevgGP42Y3UaWepXeaL7fDcvEqSSfvttUJOqchKGWK1KI6hX0Tsfy7+AUjCmbTszdCNa3VWU99yMzI/p7jtjLci81BybKfODCqqCLKk4g8ZM79xS9qvzacqSMkI19wbF6pl3V4ZuOYlYt94mk5WWqrUiK4lUvByA/YOSpXAgAAAIBTm9xDhJW3NSQVLmNCx67wvq4oku7xe11xJzfAcbuxiQGSj6c6tNi5rCKLtKpQQ6vMLLE9IZG5L84I8p3QymkGa+QOtz/Q5VuuJXUcfivUfybDxrCbr7vLPQRoVQt0pLIFdKtLzcaub5P8dBxlXoiOHiKXYQqo+f1j7aeqheVZ2g== oracle@rac1.oracle.com

Generate the RSA and DSA keys on rac2

[oracle@rac2 ~]$ ssh-keygen -t rsa

Generating public/private rsa key pair.

Enter file in which to save the key (/home/oracle/.ssh/id_rsa):

Created directory '/home/oracle/.ssh'.

Enter passphrase (empty for no passphrase):

Enter same passphrase again:

Your identification has been saved in /home/oracle/.ssh/id_rsa.

Your public key has been saved in /home/oracle/.ssh/id_rsa.pub.

The key fingerprint is:

91:41:e5:6f:b7:d1:ed:3e:03:d3:8f:7d:d0:34:58:64 oracle@rac2.oracle.com

[oracle@rac2 ~]$ ssh-keygen -t dsa

Generating public/private dsa key pair.

Enter file in which to save the key (/home/oracle/.ssh/id_dsa):

Enter passphrase (empty for no passphrase):

Enter same passphrase again:

Your identification has been saved in /home/oracle/.ssh/id_dsa.

Your public key has been saved in /home/oracle/.ssh/id_dsa.pub.

The key fingerprint is:

61:0c:4f:1f:24:de:1a:af:a3:ea:b6:d8:73:7b:fe:74 oracle@rac2.oracle.com

Append the oracle user's public keys from rac2 to the authorized_keys file on rac1

[oracle@rac1 ~]$ cd .ssh/

[oracle@rac1 .ssh]$ ssh rac2 cat ~/.ssh/id_rsa.pub >> authorized_keys

The authenticity of host 'rac2 (192.168.1.173)' can't be established.

RSA key fingerprint is fa:dd:a6:17:c0:a8:9b:f9:a8:82:ae:8c:4b:d2:90:44.

Are you sure you want to continue connecting (yes/no)? yes

Warning: Permanently added 'rac2,192.168.1.173' (RSA) to the list of known hosts.

oracle@rac2's password:

[oracle@rac1 .ssh]$ ssh rac2 cat ~/.ssh/id_dsa.pub >> authorized_keys

oracle@rac2's password:

Copy the authorized_keys file to rac2

[oracle@rac1 .ssh]$ scp authorized_keys rac2:`pwd`

oracle@rac2's password:

authorized_keys

Verify that the login works

From rac1 to rac2

[oracle@rac1 .ssh]$ ssh rac2

[oracle@rac2 ~]$ logout

Connection to rac2 closed.

From rac2 to rac1

[oracle@rac2 ~]$ ssh rac1

The authenticity of host 'rac1 (192.168.1.171)' can't be established.

RSA key fingerprint is fa:dd:a6:17:c0:a8:9b:f9:a8:82:ae:8c:4b:d2:90:44.

Are you sure you want to continue connecting (yes/no)? yes

Warning: Permanently added 'rac1,192.168.1.171' (RSA) to the list of known hosts.

[oracle@rac1 ~]$ logout

Connection to rac1 closed.

On rac1

[oracle@rac1 .ssh]$ date;ssh rac1 date

Thu Jun 21 02:22:04 CST 2012

The authenticity of host 'rac1 (192.168.1.171)' can't be established.

RSA key fingerprint is fa:dd:a6:17:c0:a8:9b:f9:a8:82:ae:8c:4b:d2:90:44.

Are you sure you want to continue connecting (yes/no)? yes

Warning: Permanently added 'rac1,192.168.1.171' (RSA) to the list of known hosts.

Thu Jun 21 02:22:06 CST 2012

On rac2

[oracle@rac2 .ssh]$ date;ssh rac1 date

Thu Jun 21 02:22:20 CST 2012

Thu Jun 21 02:22:20 CST 2012

[oracle@rac2 .ssh]$ date;ssh rac2 date

Thu Jun 21 02:22:25 CST 2012

The authenticity of host 'rac2 (192.168.1.173)' can't be established.

RSA key fingerprint is fa:dd:a6:17:c0:a8:9b:f9:a8:82:ae:8c:4b:d2:90:44.

Are you sure you want to continue connecting (yes/no)? yes

Warning: Permanently added 'rac2,192.168.1.173' (RSA) to the list of known hosts.

Thu Jun 21 02:22:26 CST 2012
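The key exchange above boils down to collecting every node's public keys in one authorized_keys file. A possible way to do that without duplicating entries when the step is repeated is sketched below; `merge_authorized_keys` is a hypothetical helper, not something shipped with OpenSSH.

```shell
#!/bin/bash
# Hypothetical helper: append public keys to an authorized_keys file,
# skipping keys that are already present, then tighten its permissions.
# $1 = target authorized_keys file; remaining arguments = public key files
merge_authorized_keys() {
    target=$1
    shift
    touch "$target"
    for pub in "$@"; do
        while IFS= read -r key || [ -n "$key" ]; do
            [ -n "$key" ] || continue
            # -x: whole line, -F: fixed string, so the key is compared literally
            grep -qxF -- "$key" "$target" || printf '%s\n' "$key" >> "$target"
        done < "$pub"
    done
    chmod 600 "$target"
}

# Example (as oracle on rac1, after fetching rac2's .pub files):
#   merge_authorized_keys ~/.ssh/authorized_keys ~/.ssh/id_rsa.pub ~/.ssh/id_dsa.pub
```

The chmod matters because sshd with its default StrictModes setting refuses key files that are writable by group or others.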

Upload the Clusterware, database, and patch archives to /s01 on rac1

[root@rac1 s01]# ll

total 2131768

-rw-r--r-- 1 oracle oinstall 316486815 Jun 21 02:38 10201_clusterware_linux_x86_64.gz

-rw-r--r-- 1 oracle oinstall 668734007 Jun 21 02:39 10201_database_linux32.zip

-rw-r--r-- 1 oracle oinstall 1195551830 Jun 21 02:40 p6810189_10204_Linux-x86-64.zip

Decompress and extract

[oracle@rac1 s01]$ gzip -d 10201_clusterware_linux_x86_64.gz

[oracle@rac1 s01]$ ll

total 2143268

-rw-r--r-- 1 oracle oinstall 328253440 Jun 21 02:38 10201_clusterware_linux_x86_64

-rw-r--r-- 1 oracle oinstall 668734007 Jun 21 02:39 10201_database_linux32.zip

-rw-r--r-- 1 oracle oinstall 1195551830 Jun 21 02:40 p6810189_10204_Linux-x86-64.zip

[oracle@rac1 s01]$ file 10201_clusterware_linux_x86_64

10201_clusterware_linux_x86_64: ASCII cpio archive (SVR4 with no CRC)

[oracle@rac1 s01]$ cpio -idvm < 10201_clusterware_linux_x86_64

Start Xmanager - Passive on the host machine

Log in to rac1 as oracle

[oracle@rac1 ~]$ w

02:34:55 up 5 min, 2 users, load average: 0.04, 0.31, 0.17

USER TTY FROM LOGIN@ IDLE JCPU PCPU WHAT

root pts/0 192.168.1.86 02:32 2:33 0.03s 0.03s -bash

oracle pts/2 192.168.1.86 02:33 0.00s 0.02s 0.01s w

[oracle@rac1 ~]$ export DISPLAY=192.168.1.86:0.0

Run the pre-installation script as root

[root@rac1 ~]# /s01/clusterware/rootpre/rootpre.sh

No OraCM running

Change the OEL release string. If you do not change it, start the installer with ./runInstaller -ignoreSysPrereqs instead

[rac1]

[root@rac1 ~]# cat /etc/redhat-release

Red Hat Enterprise Linux Server release 4.8 (Tikanga)

[root@rac1 ~]#

[rac2]

[root@rac1 ~]# cat /etc/redhat-release

Red Hat Enterprise Linux Server release 4.8 (Tikanga)
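If the release string is changed only to get past the installer's operating system check, it is worth keeping the original so it can be put back afterwards. A minimal sketch follows; the function names and the `.orig` backup suffix are assumptions made for this example.

```shell
#!/bin/bash
# Hypothetical helper: replace the release line, keeping a one-time backup.
# $1 = release file (for example /etc/redhat-release), $2 = new release line
set_release_string() {
    [ -f "$1.orig" ] || cp -p "$1" "$1.orig"   # never overwrite an existing backup
    printf '%s\n' "$2" > "$1"
}

# Hypothetical helper: restore the backup made by set_release_string.
restore_release_string() {
    [ -f "$1.orig" ] && mv "$1.orig" "$1"
}

# Example (as root, on both nodes):
#   set_release_string /etc/redhat-release 'Red Hat Enterprise Linux Server release 4.8 (Tikanga)'
#   ... run the installer ...
#   restore_release_string /etc/redhat-release
```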

Run the installer as the oracle user

[oracle@rac1 clusterware]$ pwd

/s01/clusterware

[oracle@rac1 clusterware]$ ./runInstaller

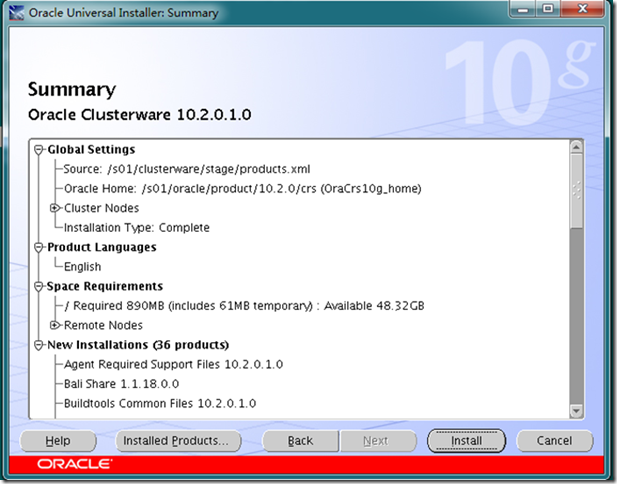

oracle inventory目录为

/s01/oracle/oraInventory

crs目录为

/s01/oracle/product/10.2.0/crs

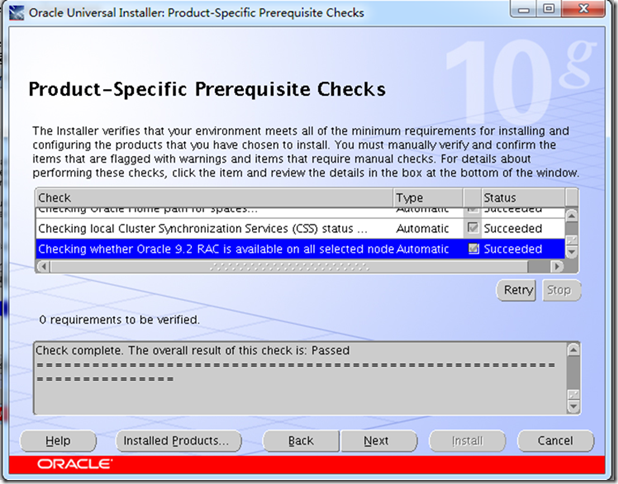

检测软件要求

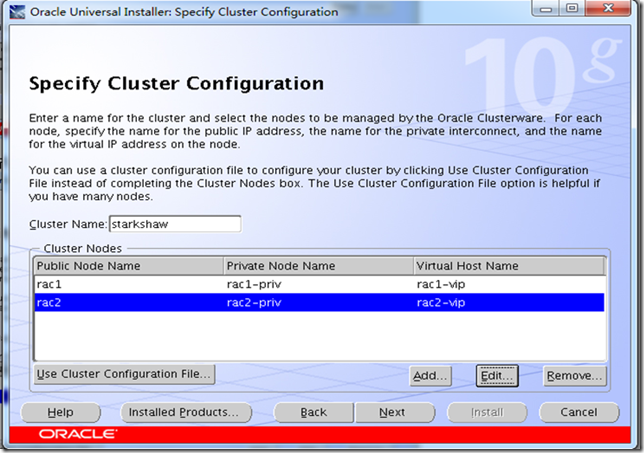

指定安装节点

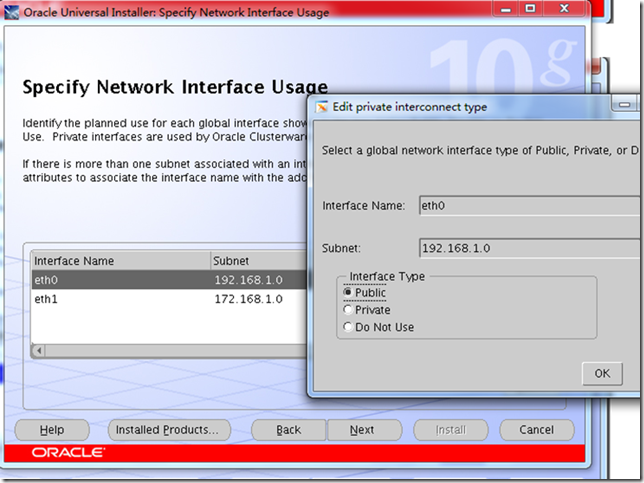

修改公私有网卡接口

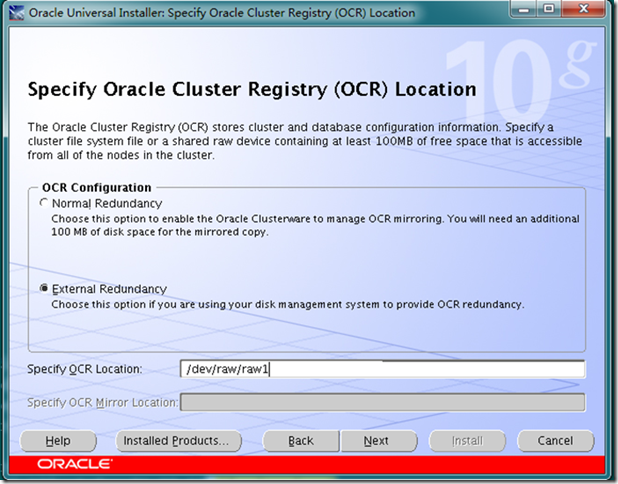

填入裸设备地址

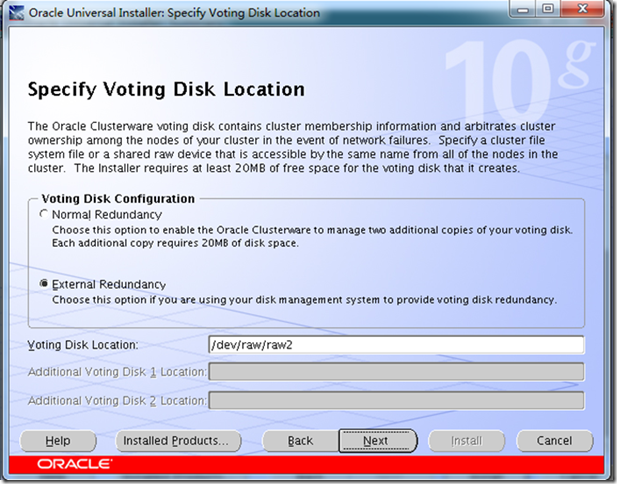

指定选举盘位置[生产环境建议用3个]

汇总

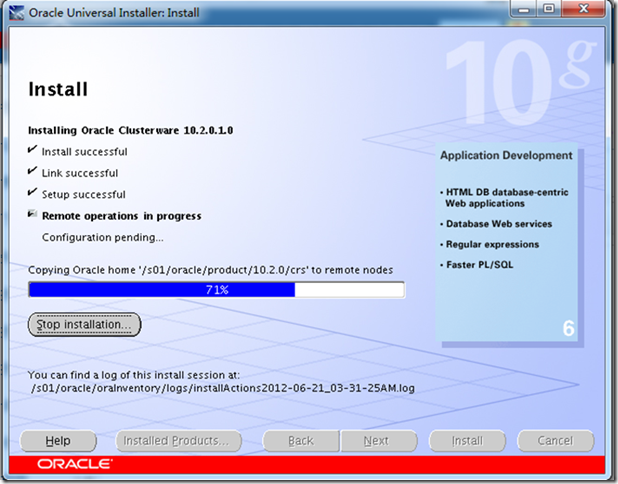

安装传输到rac2节点

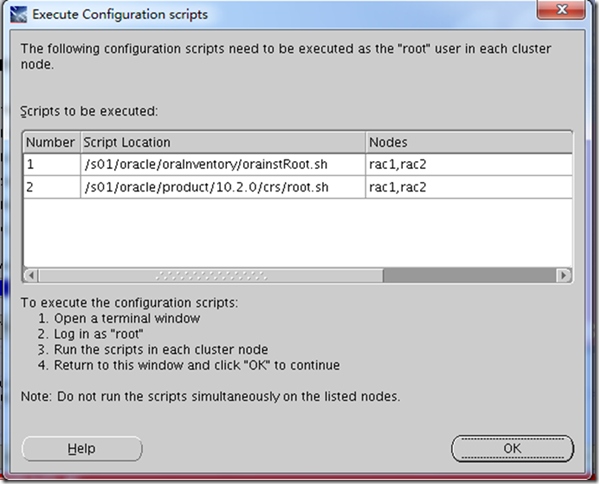

rac1和rac2上root用户执行脚本

[root@rac1 ~]# /s01/oracle/oraInventory/orainstRoot.sh

Changing permissions of /s01/oracle/oraInventory to 770.

Changing groupname of /s01/oracle/oraInventory to oinstall.

The execution of the script is complete

[root@rac1 ~]#

[root@rac1 ~]# /s01/oracle/product/10.2.0/crs/root.sh

WARNING: directory '/s01/oracle/product/10.2.0' is not owned by root

WARNING: directory '/s01/oracle/product' is not owned by root

WARNING: directory '/s01/oracle' is not owned by root

WARNING: directory '/s01' is not owned by root

Checking to see if Oracle CRS stack is already configured

/etc/oracle does not exist. Creating it now.

Setting the permissions on OCR backup directory

Setting up NS directories

Oracle Cluster Registry configuration upgraded successfully

WARNING: directory '/s01/oracle/product/10.2.0' is not owned by root

WARNING: directory '/s01/oracle/product' is not owned by root

WARNING: directory '/s01/oracle' is not owned by root

WARNING: directory '/s01' is not owned by root

Successfully accumulated necessary OCR keys.

Using ports: CSS=49895 CRS=49896 EVMC=49898 and EVMR=49897.

node <nodenumber>: <nodename> <private interconnect name> <hostname>

node 1: rac1 rac1-priv rac1

node 2: rac2 rac2-priv rac2

Creating OCR keys for user 'root', privgrp 'root'..

Operation successful.

Now formatting voting device: /dev/raw/raw2

Format of 1 voting devices complete.

Startup will be queued to init within 90 seconds.

Adding daemons to inittab

Expecting the CRS daemons to be up within 600 seconds.

CSS is active on these nodes.

rac1

CSS is inactive on these nodes.

rac2

Local node checking complete.

Run root.sh on remaining nodes to start CRS daemons.

[oracle@rac1 bin]$ cat ~/.bash_profile |grep -v "#"

if [ -f ~/.bashrc ]; then

. ~/.bashrc

fi

PATH=$PATH:$HOME/bin:/s01/oracle/product/10.2.0/crs/bin:.

export PATH

Repeat the steps above on rac2

Check the CRS status

[oracle@rac2 ~]$ crsctl check crs

CSS appears healthy

CRS appears healthy

EVM appears healthy

[oracle@rac1 ~]$ crsctl check crs

CSS appears healthy

CRS appears healthy

EVM appears healthy
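When this check is repeated on every node, it helps to turn the three lines into a single pass or fail result. The sketch below assumes the 10.2 output format shown above ("... appears healthy"); `crs_all_healthy` is a name chosen for the example.

```shell
#!/bin/bash
# Hypothetical helper: read `crsctl check crs` output on stdin and succeed
# only if CSS, CRS and EVM are all reported as healthy.
crs_all_healthy() {
    awk '
        /^(CSS|CRS|EVM) appears healthy/ { seen[$1] = 1 }
        END { exit !(seen["CSS"] && seen["CRS"] && seen["EVM"]) }
    '
}

# Example (as oracle, with the CRS bin directory in PATH):
#   crsctl check crs | crs_all_healthy && echo "CRS stack is healthy"
```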

Upgrade CRS

[oracle@rac1 10204]$ pwd

/s01/10204

[oracle@rac1 10204]$ unzip ../p6810189_10204_Linux-x86-64.zip

[oracle@rac1 10204]$ cd Disk1/

选择crs安装路径(默认路径不正确)

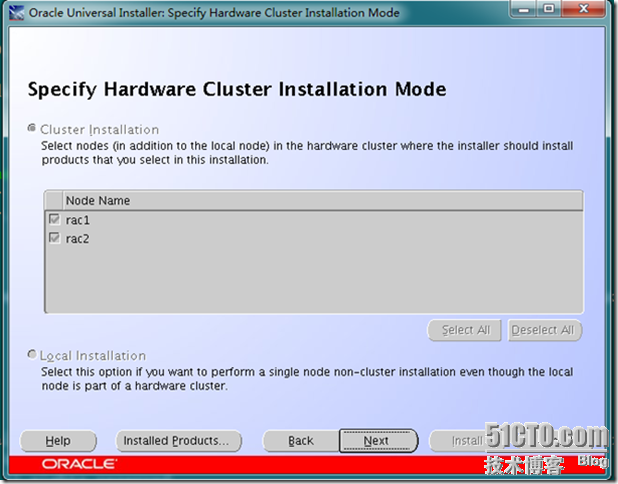

指定物理节点

汇总

升级完毕

停止crs运行脚本[这一步切记不能省略,否则patch没有打上]

[root@rac1 ~]# /s01/oracle/product/10.2.0/crs/bin/crsctl stop crs

Stopping resources.

Successfully stopped CRS resources

Stopping CSSD.

Shutting down CSS daemon.

Shutdown request successfully issued.

[root@rac1 ~]# /s01/oracle/product/10.2.0/crs/install/root102.sh

Creating pre-patch directory for saving pre-patch clusterware files

Completed patching clusterware files to /s01/oracle/product/10.2.0/crs

Relinking some shared libraries.

Relinking of patched files is complete.

WARNING: directory '/s01/oracle/product/10.2.0' is not owned by root

WARNING: directory '/s01/oracle/product' is not owned by root

WARNING: directory '/s01/oracle' is not owned by root

WARNING: directory '/s01' is not owned by root

Preparing to recopy patched init and RC scripts.

Recopying init and RC scripts.

Startup will be queued to init within 30 seconds.

Starting up the CRS daemons.

Waiting for the patched CRS daemons to start.

This may take a while on some systems.

.

10204 patch successfully applied.

clscfg: EXISTING configuration version 3 detected.

clscfg: version 3 is 10G Release 2.

Successfully accumulated necessary OCR keys.

Using ports: CSS=49895 CRS=49896 EVMC=49898 and EVMR=49897.

node <nodenumber>: <nodename> <private interconnect name> <hostname>

node 1: rac1 rac1-priv rac1

Creating OCR keys for user 'root', privgrp 'root'..

Operation successful.

clscfg -upgrade completed successfully

检查crs版本

[oracle@rac1 ~]$ crsctl query crs activeversion

CRS active version on the cluster is [10.2.0.4.0]

[oracle@rac2 ~]$ crsctl query crs activeversion

CRS active version on the cluster is [10.2.0.4.0]
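To confirm the upgrade from a script, the version can be pulled out of the bracketed part of this line. A small sketch, assuming the output format shown above; both function names are hypothetical.

```shell
#!/bin/bash
# Hypothetical helper: extract the version between [ and ] from
# `crsctl query crs activeversion` output read on stdin.
crs_version() {
    sed -n 's/.*\[\([0-9.]*\)].*/\1/p'
}

# Hypothetical helper: succeed if the version read on stdin equals $1.
crs_version_is() {
    [ "$(crs_version)" = "$1" ]
}

# Example:
#   crsctl query crs activeversion | crs_version_is 10.2.0.4.0 || echo "upgrade not active yet"
```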

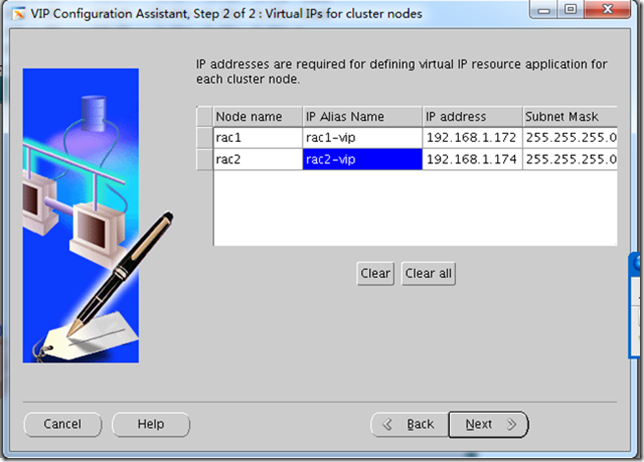

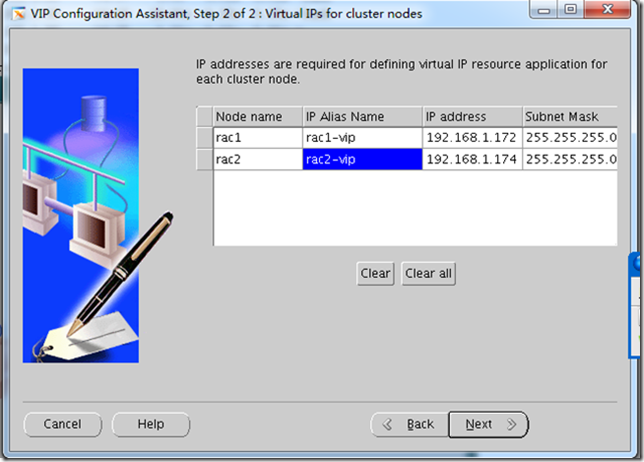

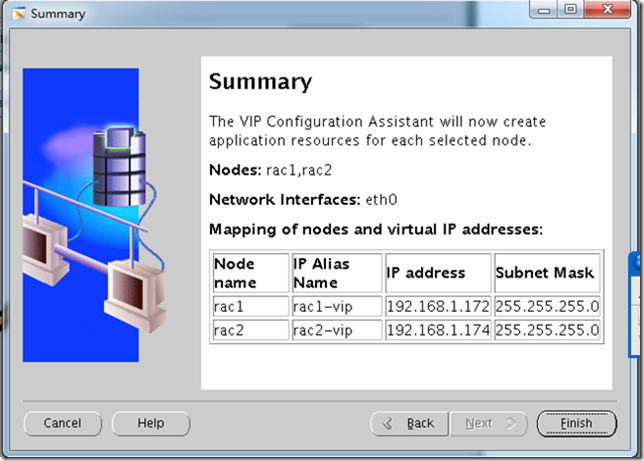

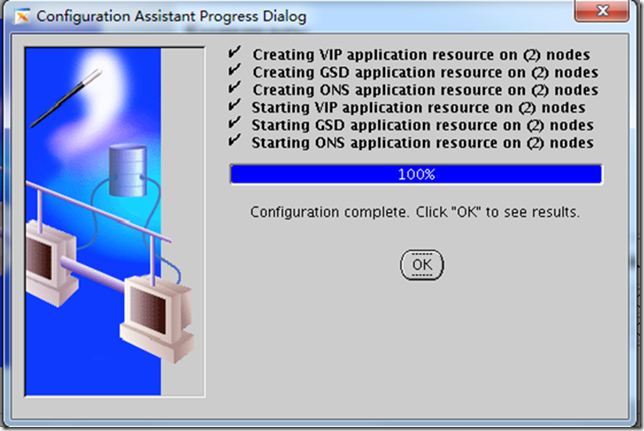

Create the VIP and GSD services

Run vipca on rac1 [requires an X Window display]

[root@rac1 ~]# w

04:45:08 up 1:17, 3 users, load average: 0.03, 0.07, 0.23

USER TTY FROM LOGIN@ IDLE JCPU PCPU WHAT

oracle pts/1 192.168.1.86 04:37 1:55 0.02s 0.02s -bash

oracle pts/2 192.168.1.86 04:19 19.00s 0.15s 0.15s -bash

root pts/3 192.168.1.86 04:25 0.00s 0.03s 0.00s w

[root@rac1 ~]# export DISPLAY=192.168.1.86:0.0

[root@rac1 ~]# /s01/oracle/product/10.2.0/crs/bin/vipca

The virtual IPs should now be up.

Check them:

[root@rac1 ~]# ip a

1: lo: <LOOPBACK,UP,LOWER_UP> mtu 16436 qdisc noqueue

link/loopback 00:00:00:00:00:00 brd 00:00:00:00:00:00

inet 127.0.0.1/8 scope host lo

2: eth0: <BROADCAST,MULTICAST,UP,LOWER_UP> mtu 1500 qdisc pfifo_fast qlen 1000

link/ether 08:00:27:35:86:84 brd ff:ff:ff:ff:ff:ff

inet 192.168.1.171/24 brd 192.168.1.255 scope global eth0

inet 192.168.1.172/24 brd 192.168.1.255 scope global secondary eth0:1

3: eth1: <BROADCAST,MULTICAST,UP,LOWER_UP> mtu 1500 qdisc pfifo_fast qlen 1000

link/ether 08:00:27:6b:9f:ea brd ff:ff:ff:ff:ff:ff

inet 172.168.1.191/24 brd 172.168.1.255 scope global eth1

[root@rac2 ~]# ip a

1: lo: <LOOPBACK,UP,LOWER_UP> mtu 16436 qdisc noqueue

link/loopback 00:00:00:00:00:00 brd 00:00:00:00:00:00

inet 127.0.0.1/8 scope host lo

2: eth0: <BROADCAST,MULTICAST,UP,LOWER_UP> mtu 1500 qdisc pfifo_fast qlen 1000

link/ether 08:00:27:06:76:9a brd ff:ff:ff:ff:ff:ff

inet 192.168.1.173/24 brd 192.168.1.255 scope global eth0

inet 192.168.1.174/24 brd 192.168.1.255 scope global secondary eth0:1

3: eth1: <BROADCAST,MULTICAST,UP,LOWER_UP> mtu 1500 qdisc pfifo_fast qlen 1000

link/ether 08:00:27:ed:8d:cf brd ff:ff:ff:ff:ff:ff

inet 172.168.1.192/24 brd 172.168.1.255 scope global eth1
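Rather than reading the listing by eye, the presence of a VIP can be tested directly. This is a sketch that parses `ip a` output as shown above; `has_address` is a hypothetical helper name.

```shell
#!/bin/bash
# Hypothetical helper: read `ip a` output on stdin and succeed if the
# IPv4 address given as $1 is configured on any interface.
has_address() {
    awk -v ip="$1" '
        $1 == "inet" { split($2, parts, "/"); if (parts[1] == ip) found = 1 }
        END { exit !found }
    '
}

# Example (on rac1, using the VIP from the address plan):
#   ip a | has_address 192.168.1.172 && echo "rac1-vip is up"
```

Comparing the address as a whole string avoids false matches such as 192.168.1.17 matching 192.168.1.172.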

This completes the CRS installation and upgrade.

Next, install database 10.2.0.1 and upgrade it to 10.2.0.4

gzip -d ../10201_database_linux_x86_64.cpio.gz

cpio -idvm < ../10201_database_linux_x86_64.cpio

解压之后执行安装程序,

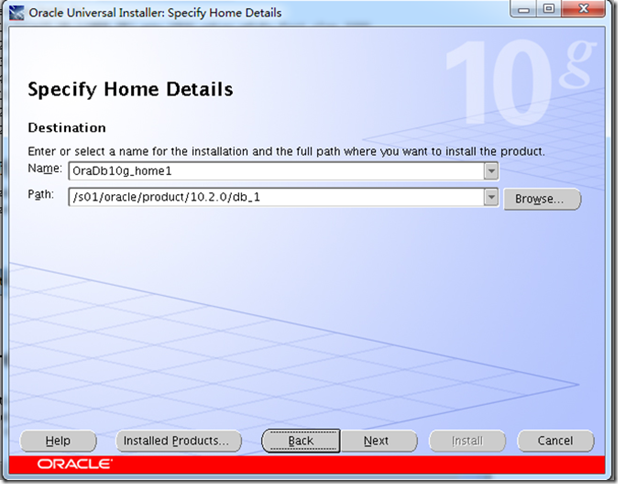

指定dbhome

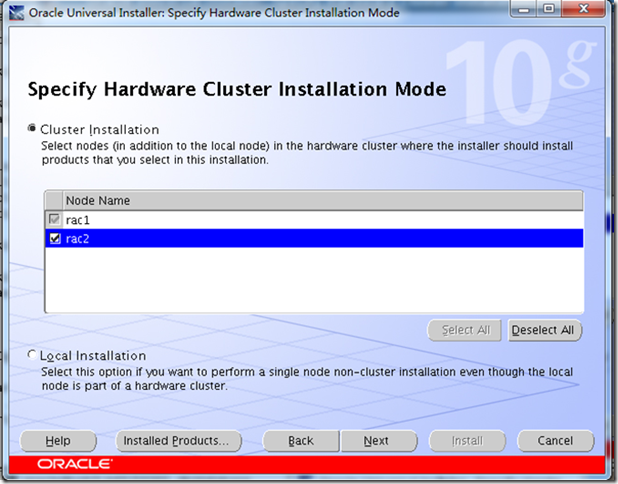

选择安装节点

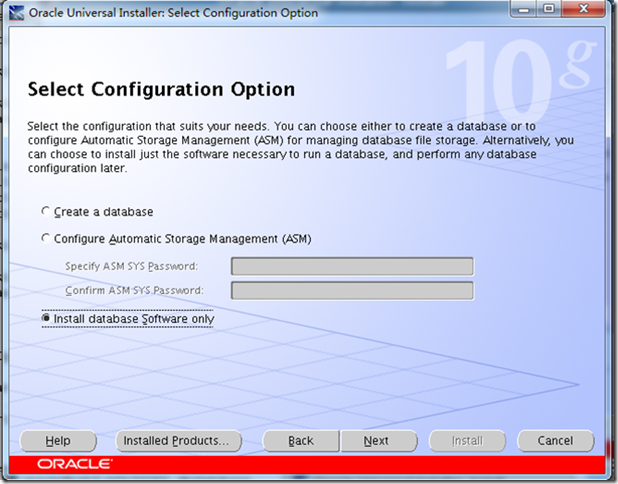

安装数据库软件

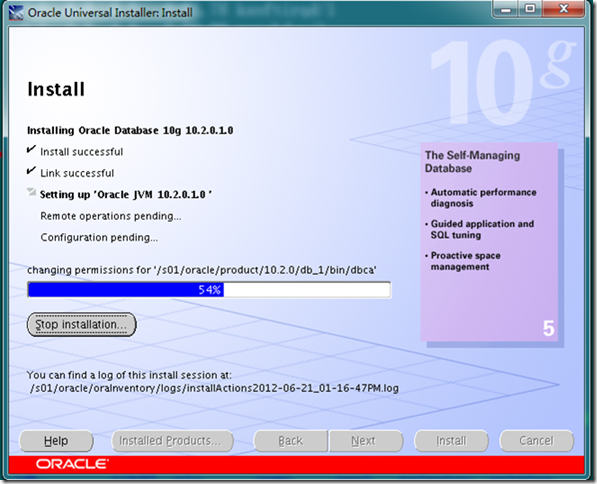

RAC1 和RAC2上都运行

[root@rac1 ~]# /s01/oracle/product/10.2.0/db_1/root.sh

Running Oracle10 root.sh script...

The following environment variables are set as:

ORACLE_OWNER= oracle

ORACLE_HOME= /s01/oracle/product/10.2.0/db_1

Enter the full pathname of the local bin directory: [/usr/local/bin]:

Copying dbhome to /usr/local/bin ...

Copying oraenv to /usr/local/bin ...

Copying coraenv to /usr/local/bin ...

Creating /etc/oratab file...

Entries will be added to the /etc/oratab file as needed by

Database Configuration Assistant when a database is created

Finished running generic part of root.sh script.

Now product-specific root actions will be performed.

Add the environment settings to the profile

[oracle@rac1 Disk1]$ cat ~/.bash_profile

# .bash_profile

# Get the aliases and functions

if [ -f ~/.bashrc ]; then

. ~/.bashrc

fi

# User specific environment and startup programs

PATH=$PATH:$HOME/bin:/s01/oracle/product/10.2.0/crs/bin:.

export PATH

ORA_CRS_HOME=/s01/oracle/product/10.2.0/crs

export ORA_CRS_HOME

export ORACLE_HOME=/s01/oracle/product/10.2.0/db_1

export ORACLE_SID=stark2   # on rac2

export ORACLE_SID=stark1   # on rac1

export PATH=$PATH:$ORACLE_HOME/bin:.
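Because the two nodes differ only in ORACLE_SID, one profile can serve both if the SID is derived from the host name. A possible sketch, assuming the host names rac1 and rac2 and the SIDs stark1 and stark2 used in this walkthrough; `sid_for_host` is a made-up function name.

```shell
#!/bin/bash
# Hypothetical helper: map a host name (short or fully qualified) to its SID.
sid_for_host() {
    case "$1" in
        rac1|rac1.*) echo stark1 ;;
        rac2|rac2.*) echo stark2 ;;
        *) return 1 ;;              # unknown host: print nothing, signal failure
    esac
}

# Example line for ~/.bash_profile on both nodes:
#   export ORACLE_SID=$(sid_for_host "$(hostname)")
```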

安装数据库补丁

Runinstaller

确定安装路径oracle_home

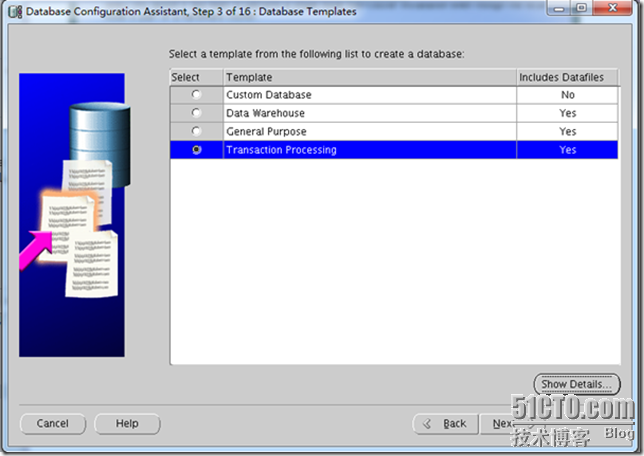

安装数据库选型

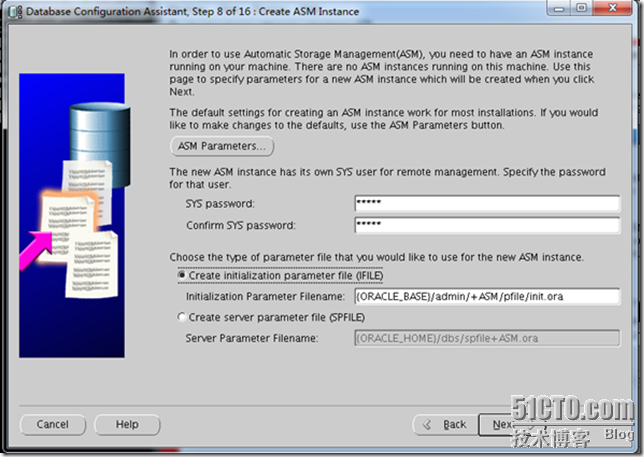

使用asm存储创建asm密码

会提示1521不存在是否创建

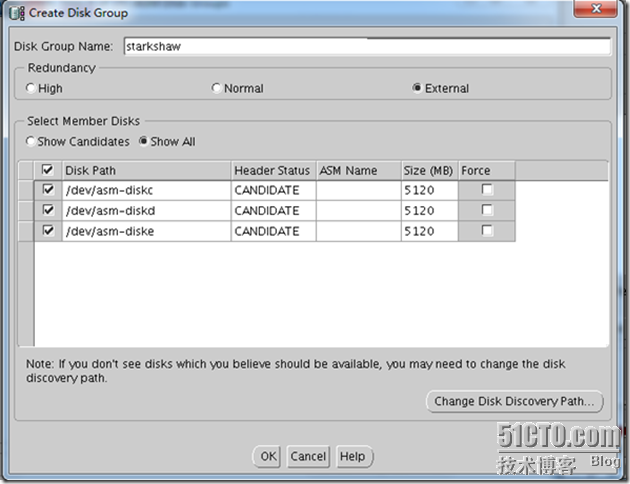

使用创建的asm,不一定能全部找到,选择change disk discovey path指定络设备路径

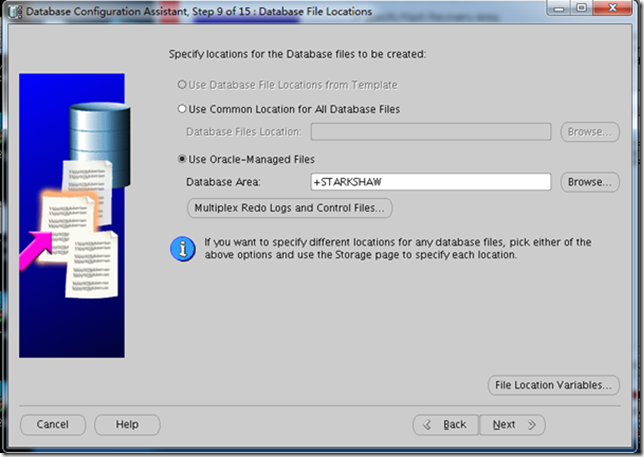

指定数据存储区域

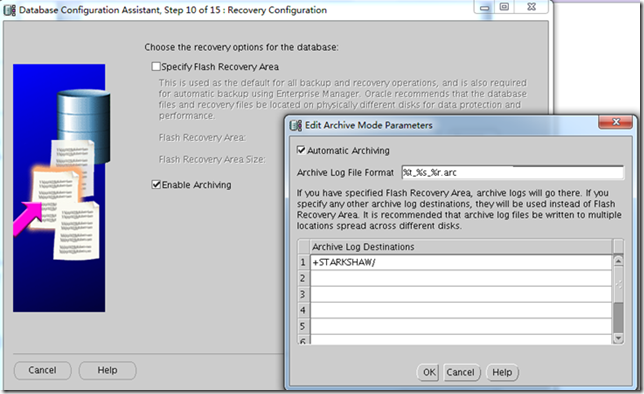

修改归档日志格式

选择sample schgemas

跳过管理service 管理

Process 300

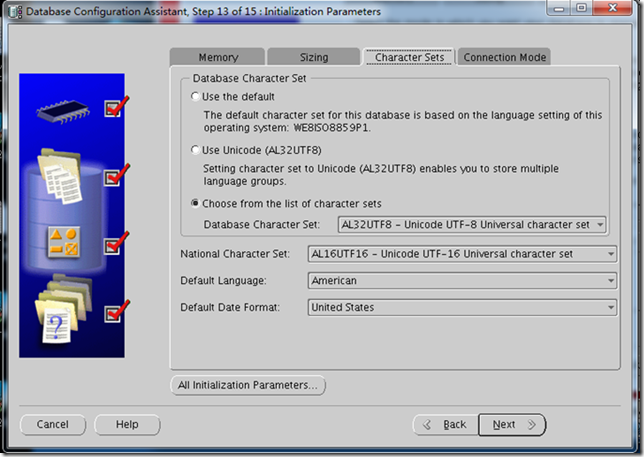

Use the recommended Unicode character set (AL32UTF8)

Connection mode: dedicated server

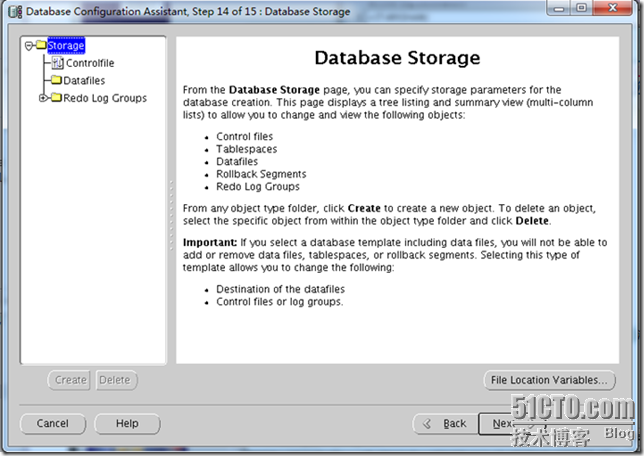

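Summary

Database creation is complete and the instances have been started

Then run the following command to check that everything is up.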

[oracle@rac1 onlinelog]$ crs_stat -t -v

shell-init: error retrieving current directory: getcwd: cannot access parent directories: No such file or directory

Name Type R/RA F/FT Target State Host

----------------------------------------------------------------------

ora....SM1.asm application 0/5 0/0 ONLINE ONLINE rac1

ora....C1.lsnr application 0/5 0/0 ONLINE ONLINE rac1

ora.rac1.gsd application 0/5 0/0 ONLINE ONLINE rac1

ora.rac1.ons application 0/3 0/0 ONLINE ONLINE rac1

ora.rac1.vip application 0/0 0/0 ONLINE ONLINE rac1

ora....SM2.asm application 0/5 0/0 ONLINE ONLINE rac2

ora....C2.lsnr application 0/5 0/0 ONLINE ONLINE rac2

ora.rac2.gsd application 0/5 0/0 ONLINE ONLINE rac2

ora.rac2.ons application 0/3 0/0 ONLINE ONLINE rac2

ora.rac2.vip application 0/0 0/0 ONLINE ONLINE rac2

ora.stark.db application 0/0 0/1 ONLINE ONLINE rac1

ora....k1.inst application 0/5 0/0 ONLINE ONLINE rac1

ora....k2.inst application 0/5 0/0 ONLINE ONLINE rac2

Test RAC: shut down the instance on rac1, then check the resources from rac2

[oracle@rac1 ~]$ sqlplus /'as sysdba'

SQL*Plus: Release 10.2.0.4.0 - Production on Thu Jun 21 20:07:08 2012

Copyright (c) 1982, 2007, Oracle. All Rights Reserved.

Connected to:

Oracle Database 10g Enterprise Edition Release 10.2.0.4.0 - 64bit Production

With the Partitioning, Real Application Clusters, OLAP, Data Mining

and Real Application Testing options

SQL> shutdown immediate;

Database closed.

Database dismounted.

ORACLE instance shut down.

SQL>

[oracle@rac2 ~]$ crs_stat -t -v

Name Type R/RA F/FT Target State Host

----------------------------------------------------------------------

ora....SM1.asm application 0/5 0/0 ONLINE ONLINE rac1

ora....C1.lsnr application 0/5 0/0 ONLINE ONLINE rac1

ora.rac1.gsd application 0/5 0/0 ONLINE ONLINE rac1

ora.rac1.ons application 0/3 0/0 ONLINE ONLINE rac1

ora.rac1.vip application 0/0 0/0 ONLINE ONLINE rac1

ora....SM2.asm application 0/5 0/0 ONLINE ONLINE rac2

ora....C2.lsnr application 0/5 0/0 ONLINE ONLINE rac2

ora.rac2.gsd application 0/5 0/0 ONLINE ONLINE rac2

ora.rac2.ons application 0/3 0/0 ONLINE ONLINE rac2

ora.rac2.vip application 0/0 0/0 ONLINE ONLINE rac2

ora.stark.db application 0/0 0/1 ONLINE ONLINE rac1

ora....k1.inst application 0/5 0/0 OFFLINE OFFLINE

ora....k2.inst application 0/5 0/0 ONLINE ONLINE rac2
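With more than a dozen resources, the one that went OFFLINE is easy to miss in the listing. The sketch below filters it out; it assumes the `crs_stat -t -v` column layout shown above (Name, Type, R/RA, F/FT, Target, State, Host), and `offline_resources` is a hypothetical helper name.

```shell
#!/bin/bash
# Hypothetical helper: read `crs_stat -t -v` output on stdin and print the
# name of every resource whose State column (field 6) is not ONLINE.
offline_resources() {
    awk '$2 == "application" && $6 != "ONLINE" { print $1 }'
}

# Example:
#   crs_stat -t -v | offline_resources
```

Header and separator lines are skipped because their second field is not "application". With plain `crs_stat -t` the State column sits in a different position, so the field number would have to be adjusted.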

Reposted from: https://blog.51cto.com/gavinshaw/906119