Convolutional Neural Network (Inception V3) for Sign Language Recognition

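Table of Contents

- I. Preface
- II. Preliminary Work
  - 1. Set up the GPU (skip this step if you are using a CPU)
  - 2. Import the data
  - 3. Inspect the data
- III. Data Preprocessing
  - 1. Load the data
  - 2. Visualize the data
  - 3. Check the data again
  - 4. Configure the dataset
- IV. Building the Inception V3 Model
  - 1. Building it yourself
  - 2. Official model
- V. Compile the Model
- VI. Train the Model
- VII. Model Evaluation
- VIII. Save and Load the Model
- IX. Prediction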

I. Preface

My environment:

- Language: Python 3.6.5

- Editor: Jupyter Notebook

- Deep learning framework: TensorFlow 2.4.1


II. Preliminary Work

1. Set up the GPU (skip this step if you are using a CPU)

import tensorflow as tf

gpus = tf.config.list_physical_devices("GPU")

if gpus:
    tf.config.experimental.set_memory_growth(gpus[0], True)  # allocate GPU memory on demand
    tf.config.set_visible_devices([gpus[0]], "GPU")

2. Import the data

import os, PIL, pathlib

import matplotlib.pyplot as plt
import numpy as np
import tensorflow as tf
from tensorflow import keras
from tensorflow.keras import layers, models

# Display Chinese characters correctly in plots
plt.rcParams['font.sans-serif'] = ['SimHei']  # font used for Chinese labels
plt.rcParams['axes.unicode_minus'] = False    # render minus signs correctly

# Set random seeds so results are as reproducible as possible
np.random.seed(1)
tf.random.set_seed(1)

# One subdirectory per letter inside "gestures"
data_dir = "gestures"
data_dir = pathlib.Path(data_dir)

3. Inspect the data

image_count = len(list(data_dir.glob('*/*')))
print("Total number of images:", image_count)

Total number of images: 12547

III. Data Preprocessing

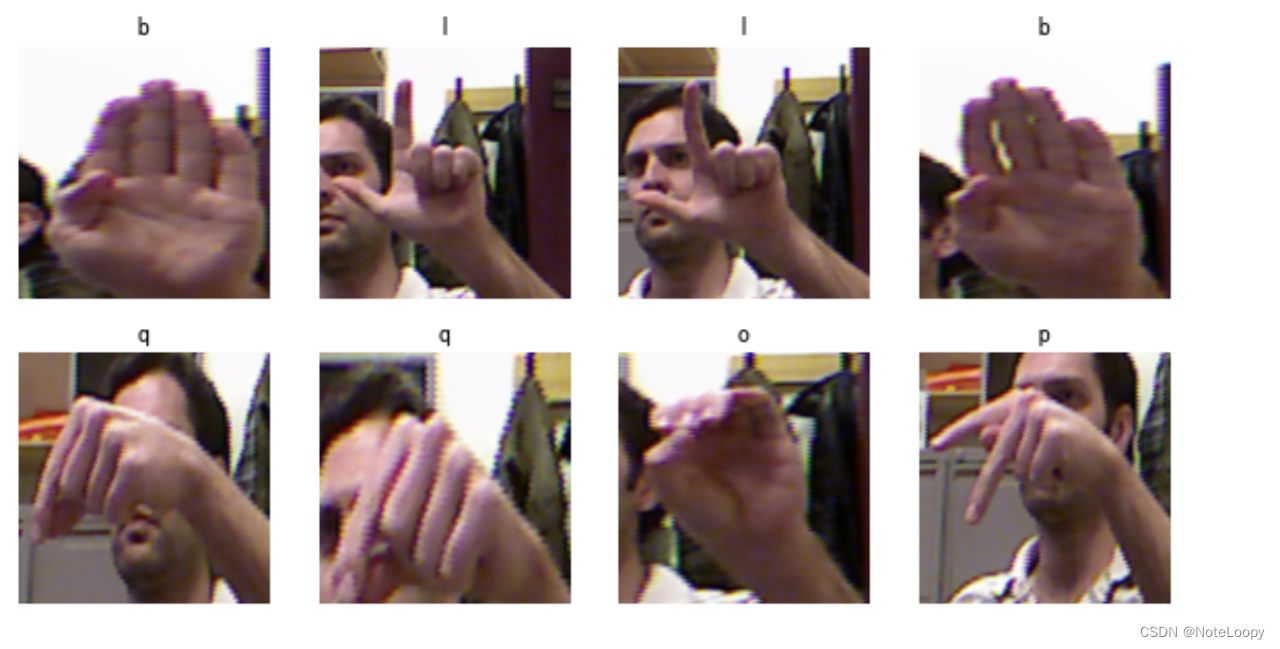

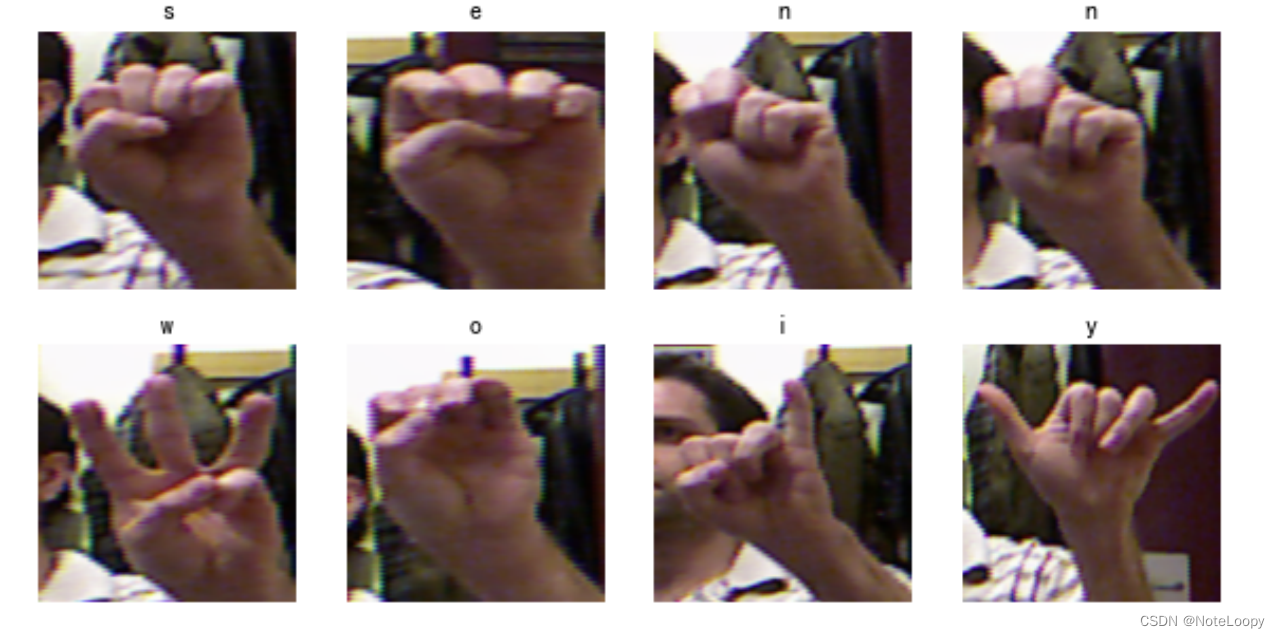

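This article recognizes the static hand-sign poses for 24 letters of the English alphabet (the signs for the remaining two letters, J and Z, involve motion). Each sign has 500+ images.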
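As a quick check of the count above, the 24 static letters are simply the English alphabet without J and Z. The plain-Python snippet below (no TensorFlow needed) builds that list; it should agree with the class names printed after loading the data.

```python
import string

# The 24 static hand-sign letters: the alphabet minus the motion-based signs J and Z
static_letters = [c for c in string.ascii_lowercase if c not in ("j", "z")]

print(len(static_letters))  # 24
print(static_letters)
```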

1. Load the data

Use the image_dataset_from_directory method to load the images from disk into a tf.data.Dataset.

batch_size = 8

img_height = 224

img_width = 224

If you are using TensorFlow 2.2.0, you may get the error module 'tensorflow.keras.preprocessing' has no attribute 'image_dataset_from_directory'. Upgrading TensorFlow fixes it (the function was introduced in TensorFlow 2.3).

train_ds = tf.keras.preprocessing.image_dataset_from_directory(
    data_dir,
    validation_split=0.2,
    subset="training",
    seed=123,
    image_size=(img_height, img_width),
    batch_size=batch_size)

Found 12547 files belonging to 24 classes.

Using 10038 files for training.
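The 10038 figure follows from validation_split=0.2. As far as I understand the Keras implementation, the number of validation files is int(validation_split * total) and all remaining files go to training. The plain-Python sketch below reproduces that arithmetic; split_counts is a hypothetical helper, not a Keras API.

```python
def split_counts(total_files, validation_split):
    """Hypothetical helper mirroring how the training/validation file counts are derived."""
    num_val = int(validation_split * total_files)  # validation files, rounded down
    num_train = total_files - num_val               # everything else is used for training
    return num_train, num_val

num_train, num_val = split_counts(12547, 0.2)
print(num_train, num_val)  # 10038 2509
```

Because both calls use the same seed=123 and validation_split=0.2, the training and validation subsets do not overlap.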

val_ds = tf.keras.preprocessing.image_dataset_from_directory(
    data_dir,
    validation_split=0.2,
    subset="validation",
    seed=123,
    image_size=(img_height, img_width),
    batch_size=batch_size)

class_names = train_ds.class_names

print(class_names)

['a', 'b', 'c', 'd', 'e', 'f', 'g', 'h', 'i', 'k', 'l', 'm', 'n', 'o', 'p', 'q', 'r', 's', 't', 'u', 'v', 'w', 'x', 'y']
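With its default labels='inferred', image_dataset_from_directory takes the class names from the subdirectory names of data_dir, sorted alphanumerically, and assigns integer labels in that order; that is why class_names comes out in alphabetical order. The stdlib sketch below imitates only the naming step on a temporary directory tree; infer_class_names is a hypothetical helper and the folder names are illustrative.

```python
import pathlib
import tempfile

def infer_class_names(root):
    """Hypothetical helper: sorted subdirectory names, like the inferred class_names."""
    return sorted(p.name for p in pathlib.Path(root).iterdir() if p.is_dir())

with tempfile.TemporaryDirectory() as tmp:
    # Create a few class folders in a deliberately unsorted order
    for name in ["c", "a", "b"]:
        (pathlib.Path(tmp) / name).mkdir()
    class_names = infer_class_names(tmp)
    # Integer label i corresponds to class_names[i]
    label_of = {name: i for i, name in enumerate(class_names)}

print(class_names)  # ['a', 'b', 'c']
print(label_of)     # {'a': 0, 'b': 1, 'c': 2}
```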

2. Visualize the data

plt.figure(figsize=(10, 5))  # figure is 10 wide and 5 tall

for images, labels in train_ds.take(1):
    for i in range(8):
        ax = plt.subplot(2, 4, i + 1)
        plt.imshow(images[i].numpy().astype("uint8"))
        plt.title(class_names[labels[i]])
        plt.axis("off")

plt.imshow(images[1].numpy().astype("uint8"))

3. Check the data again

for image_batch, labels_batch in train_ds:
    print(image_batch.shape)
    print(labels_batch.shape)
    break

(8, 224, 224, 3)

(8,)

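image_batch is a tensor of shape (8, 224, 224, 3): a batch of 8 images, each 224x224x3 (the last dimension is the RGB color channels). labels_batch is a tensor of shape (8,) holding the labels of those 8 images.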
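To make the shapes concrete: a batch is just 8 images of 224x224x3 stacked along a new leading axis, with one integer label per image. The NumPy sketch below uses zero-filled placeholder arrays instead of real images.

```python
import numpy as np

batch_size, img_height, img_width = 8, 224, 224

# Eight placeholder RGB images (height x width x 3 channels)
images = [np.zeros((img_height, img_width, 3), dtype=np.float32) for _ in range(batch_size)]
labels = np.arange(batch_size)  # one integer class label per image

image_batch = np.stack(images)  # stack along a new batch axis
print(image_batch.shape)  # (8, 224, 224, 3)
print(labels.shape)       # (8,)
```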

4. Configure the dataset

AUTOTUNE = tf.data.AUTOTUNE

train_ds = train_ds.cache().shuffle(1000).prefetch(buffer_size=AUTOTUNE)
val_ds = val_ds.cache().prefetch(buffer_size=AUTOTUNE)
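The configuration above chains three tf.data methods: cache() keeps elements in memory once they have been read in the first epoch, prefetch(buffer_size=AUTOTUNE) prepares upcoming elements while the model trains on the current ones, and shuffle(1000) shuffles through a buffer of 1000 elements rather than the whole dataset. Because image_dataset_from_directory already returns batches, each element here is a batch of 8 images. The pure-Python sketch below is a conceptual model of buffer-based shuffling, not TensorFlow's implementation; buffer_shuffle is a hypothetical name.

```python
import random

def buffer_shuffle(stream, buffer_size, seed=None):
    """Conceptual model of Dataset.shuffle(buffer_size): sample from a sliding buffer."""
    rng = random.Random(seed)
    buffer = []
    for item in stream:
        buffer.append(item)
        if len(buffer) == buffer_size:
            # Buffer is full: emit a random element and free its slot
            yield buffer.pop(rng.randrange(len(buffer)))
    # Input exhausted: drain whatever is left in random order
    rng.shuffle(buffer)
    yield from buffer

out = list(buffer_shuffle(range(10), buffer_size=3, seed=0))
print(out)
```

With a small buffer, an element can only move a limited distance from its original position (the first output is always one of the first 3 inputs here), which is why buffer_size should be large relative to the dataset for a thorough shuffle.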

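IV. Building the Inception V3 Model

1. Building it yourself

Below is the core of this article: building the Inception V3 network. You can try to build Inception V3 yourself by following the figure above. For this part I mainly followed the official implementation and extracted it as standalone code.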

#=============================================================
# Inception V3 network
#=============================================================
from tensorflow.keras.models import Model
from tensorflow.keras import layers
from tensorflow.keras.layers import Activation, Dense, Input, BatchNormalization, Conv2D, AveragePooling2D
from tensorflow.keras.layers import GlobalAveragePooling2D, MaxPooling2D


def conv2d_bn(x, filters, num_row, num_col, padding='same', strides=(1, 1), name=None):
    # Conv2D (no bias) -> BatchNormalization -> ReLU
    if name is not None:
        bn_name = name + '_bn'
        conv_name = name + '_conv'
    else:
        bn_name = None
        conv_name = None

    x = Conv2D(filters, (num_row, num_col), strides=strides, padding=padding,
               use_bias=False, name=conv_name)(x)
    x = BatchNormalization(scale=False, name=bn_name)(x)
    x = Activation('relu', name=name)(x)
    return x


def InceptionV3(input_shape=[224, 224, 3], classes=1000):
    img_input = Input(shape=input_shape)

    # Stem
    x = conv2d_bn(img_input, 32, 3, 3, strides=(2, 2), padding='valid')
    x = conv2d_bn(x, 32, 3, 3, padding='valid')
    x = conv2d_bn(x, 64, 3, 3)
    x = MaxPooling2D((3, 3), strides=(2, 2))(x)

    x = conv2d_bn(x, 80, 1, 1, padding='valid')
    x = conv2d_bn(x, 192, 3, 3, padding='valid')
    x = MaxPooling2D((3, 3), strides=(2, 2))(x)

    #================================#
    # Block1 35x35
    #================================#
    # Block1 part1
    # 35 x 35 x 192 -> 35 x 35 x 256
    branch1x1 = conv2d_bn(x, 64, 1, 1)

    branch5x5 = conv2d_bn(x, 48, 1, 1)
    branch5x5 = conv2d_bn(branch5x5, 64, 5, 5)

    branch3x3dbl = conv2d_bn(x, 64, 1, 1)
    branch3x3dbl = conv2d_bn(branch3x3dbl, 96, 3, 3)
    branch3x3dbl = conv2d_bn(branch3x3dbl, 96, 3, 3)

    branch_pool = AveragePooling2D((3, 3), strides=(1, 1), padding='same')(x)
    branch_pool = conv2d_bn(branch_pool, 32, 1, 1)
    x = layers.concatenate([branch1x1, branch5x5, branch3x3dbl, branch_pool], axis=3, name='mixed0')

    # Block1 part2
    # 35 x 35 x 256 -> 35 x 35 x 288
    branch1x1 = conv2d_bn(x, 64, 1, 1)

    branch5x5 = conv2d_bn(x, 48, 1, 1)
    branch5x5 = conv2d_bn(branch5x5, 64, 5, 5)

    branch3x3dbl = conv2d_bn(x, 64, 1, 1)
    branch3x3dbl = conv2d_bn(branch3x3dbl, 96, 3, 3)
    branch3x3dbl = conv2d_bn(branch3x3dbl, 96, 3, 3)

    branch_pool = AveragePooling2D((3, 3), strides=(1, 1), padding='same')(x)
    branch_pool = conv2d_bn(branch_pool, 64, 1, 1)
    x = layers.concatenate([branch1x1, branch5x5, branch3x3dbl, branch_pool], axis=3, name='mixed1')

    # Block1 part3
    # 35 x 35 x 288 -> 35 x 35 x 288
    branch1x1 = conv2d_bn(x, 64, 1, 1)

    branch5x5 = conv2d_bn(x, 48, 1, 1)
    branch5x5 = conv2d_bn(branch5x5, 64, 5, 5)

    branch3x3dbl = conv2d_bn(x, 64, 1, 1)
    branch3x3dbl = conv2d_bn(branch3x3dbl, 96, 3, 3)
    branch3x3dbl = conv2d_bn(branch3x3dbl, 96, 3, 3)

    branch_pool = AveragePooling2D((3, 3), strides=(1, 1), padding='same')(x)
    branch_pool = conv2d_bn(branch_pool, 64, 1, 1)
    x = layers.concatenate([branch1x1, branch5x5, branch3x3dbl, branch_pool], axis=3, name='mixed2')

    #================================#
    # Block2 17x17
    #================================#
    # Block2 part1
    # 35 x 35 x 288 -> 17 x 17 x 768
    branch3x3 = conv2d_bn(x, 384, 3, 3, strides=(2, 2), padding='valid')

    branch3x3dbl = conv2d_bn(x, 64, 1, 1)
    branch3x3dbl = conv2d_bn(branch3x3dbl, 96, 3, 3)
    branch3x3dbl = conv2d_bn(branch3x3dbl, 96, 3, 3, strides=(2, 2), padding='valid')

    branch_pool = MaxPooling2D((3, 3), strides=(2, 2))(x)
    x = layers.concatenate([branch3x3, branch3x3dbl, branch_pool], axis=3, name='mixed3')

    # Block2 part2
    # 17 x 17 x 768 -> 17 x 17 x 768
    branch1x1 = conv2d_bn(x, 192, 1, 1)

    branch7x7 = conv2d_bn(x, 128, 1, 1)
    branch7x7 = conv2d_bn(branch7x7, 128, 1, 7)
    branch7x7 = conv2d_bn(branch7x7, 192, 7, 1)

    branch7x7dbl = conv2d_bn(x, 128, 1, 1)
    branch7x7dbl = conv2d_bn(branch7x7dbl, 128, 7, 1)
    branch7x7dbl = conv2d_bn(branch7x7dbl, 128, 1, 7)
    branch7x7dbl = conv2d_bn(branch7x7dbl, 128, 7, 1)
    branch7x7dbl = conv2d_bn(branch7x7dbl, 192, 1, 7)

    branch_pool = AveragePooling2D((3, 3), strides=(1, 1), padding='same')(x)
    branch_pool = conv2d_bn(branch_pool, 192, 1, 1)
    x = layers.concatenate([branch1x1, branch7x7, branch7x7dbl, branch_pool], axis=3, name='mixed4')

    # Block2 part3 and part4
    # 17 x 17 x 768 -> 17 x 17 x 768 -> 17 x 17 x 768
    for i in range(2):
        branch1x1 = conv2d_bn(x, 192, 1, 1)

        branch7x7 = conv2d_bn(x, 160, 1, 1)
        branch7x7 = conv2d_bn(branch7x7, 160, 1, 7)
        branch7x7 = conv2d_bn(branch7x7, 192, 7, 1)

        branch7x7dbl = conv2d_bn(x, 160, 1, 1)
        branch7x7dbl = conv2d_bn(branch7x7dbl, 160, 7, 1)
        branch7x7dbl = conv2d_bn(branch7x7dbl, 160, 1, 7)
        branch7x7dbl = conv2d_bn(branch7x7dbl, 160, 7, 1)
        branch7x7dbl = conv2d_bn(branch7x7dbl, 192, 1, 7)

        branch_pool = AveragePooling2D((3, 3), strides=(1, 1), padding='same')(x)
        branch_pool = conv2d_bn(branch_pool, 192, 1, 1)
        x = layers.concatenate([branch1x1, branch7x7, branch7x7dbl, branch_pool],
                               axis=3, name='mixed' + str(5 + i))

    # Block2 part5
    # 17 x 17 x 768 -> 17 x 17 x 768
    branch1x1 = conv2d_bn(x, 192, 1, 1)

    branch7x7 = conv2d_bn(x, 192, 1, 1)
    branch7x7 = conv2d_bn(branch7x7, 192, 1, 7)
    branch7x7 = conv2d_bn(branch7x7, 192, 7, 1)

    branch7x7dbl = conv2d_bn(x, 192, 1, 1)
    branch7x7dbl = conv2d_bn(branch7x7dbl, 192, 7, 1)
    branch7x7dbl = conv2d_bn(branch7x7dbl, 192, 1, 7)
    branch7x7dbl = conv2d_bn(branch7x7dbl, 192, 7, 1)
    branch7x7dbl = conv2d_bn(branch7x7dbl, 192, 1, 7)

    branch_pool = AveragePooling2D((3, 3), strides=(1, 1), padding='same')(x)
    branch_pool = conv2d_bn(branch_pool, 192, 1, 1)
    x = layers.concatenate([branch1x1, branch7x7, branch7x7dbl, branch_pool], axis=3, name='mixed7')

    #================================#
    # Block3 8x8
    #================================#
    # Block3 part1
    # 17 x 17 x 768 -> 8 x 8 x 1280
    branch3x3 = conv2d_bn(x, 192, 1, 1)
    branch3x3 = conv2d_bn(branch3x3, 320, 3, 3, strides=(2, 2), padding='valid')

    branch7x7x3 = conv2d_bn(x, 192, 1, 1)
    branch7x7x3 = conv2d_bn(branch7x7x3, 192, 1, 7)
    branch7x7x3 = conv2d_bn(branch7x7x3, 192, 7, 1)
    branch7x7x3 = conv2d_bn(branch7x7x3, 192, 3, 3, strides=(2, 2), padding='valid')

    branch_pool = MaxPooling2D((3, 3), strides=(2, 2))(x)
    x = layers.concatenate([branch3x3, branch7x7x3, branch_pool], axis=3, name='mixed8')

    # Block3 part2 and part3
    # 8 x 8 x 1280 -> 8 x 8 x 2048 -> 8 x 8 x 2048
    for i in range(2):
        branch1x1 = conv2d_bn(x, 320, 1, 1)

        branch3x3 = conv2d_bn(x, 384, 1, 1)
        branch3x3_1 = conv2d_bn(branch3x3, 384, 1, 3)
        branch3x3_2 = conv2d_bn(branch3x3, 384, 3, 1)
        branch3x3 = layers.concatenate([branch3x3_1, branch3x3_2], axis=3, name='mixed9_' + str(i))

        branch3x3dbl = conv2d_bn(x, 448, 1, 1)
        branch3x3dbl = conv2d_bn(branch3x3dbl, 384, 3, 3)
        branch3x3dbl_1 = conv2d_bn(branch3x3dbl, 384, 1, 3)
        branch3x3dbl_2 = conv2d_bn(branch3x3dbl, 384, 3, 1)
        branch3x3dbl = layers.concatenate([branch3x3dbl_1, branch3x3dbl_2], axis=3)

        branch_pool = AveragePooling2D((3, 3), strides=(1, 1), padding='same')(x)
        branch_pool = conv2d_bn(branch_pool, 192, 1, 1)
        x = layers.concatenate([branch1x1, branch3x3, branch3x3dbl, branch_pool],
                               axis=3, name='mixed' + str(9 + i))

    # Global average pooling followed by a fully connected (softmax) layer
    x = GlobalAveragePooling2D(name='avg_pool')(x)
    x = Dense(classes, activation='softmax', name='predictions')(x)

    inputs = img_input
    model = Model(inputs, x, name='inception_v3')
    return model


# classes defaults to 1000; InceptionV3(classes=len(class_names)) would match the 24 sign classes
model = InceptionV3()

model.summary()
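The shape comments inside InceptionV3 (35x35, 17x17, 8x8) come from the original 299x299 input size. The plain-Python sketch below applies the usual output-size formula for 'valid' convolutions and pooling, floor((n - k) / s) + 1 ('same' padding with stride 1 keeps the size), to the stem and the two grid-reduction blocks, and also sums the branch channels of a few mixed blocks. It is a hand calculation under those assumptions (valid_out and grid_sizes are hypothetical helpers); model.summary() remains the authoritative check.

```python
def valid_out(n, k, s=1):
    """Output size of a 'valid' conv/pool with kernel k and stride s."""
    return (n - k) // s + 1

def grid_sizes(n):
    """Spatial size entering Block1, Block2 and Block3 of the InceptionV3 code above."""
    n = valid_out(n, 3, 2)   # conv 3x3, stride 2, valid
    n = valid_out(n, 3)      # conv 3x3, valid (the next 3x3 'same' conv keeps n)
    n = valid_out(n, 3, 2)   # max pool 3x3, stride 2 (the 1x1 conv keeps n)
    n = valid_out(n, 3)      # conv 3x3, valid
    block1 = valid_out(n, 3, 2)       # max pool 3x3, stride 2
    block2 = valid_out(block1, 3, 2)  # mixed3: stride-2 3x3 branches
    block3 = valid_out(block2, 3, 2)  # mixed8: stride-2 3x3 branches
    return block1, block2, block3

print(grid_sizes(299))  # (35, 17, 8)
print(grid_sizes(224))  # (25, 12, 5)

# Channels after concatenation = sum of the branch filter counts
mixed0 = 64 + 64 + 96 + 32              # 256
mixed2 = 64 + 64 + 96 + 64              # 288 (same for mixed1)
mixed3 = 384 + 96 + mixed2              # max-pool branch passes 288 channels through: 768
mixed7 = 192 * 4                        # 768
mixed8 = 320 + 192 + mixed7             # 1280
mixed9 = 320 + 2 * 384 + 2 * 384 + 192  # 2048
print(mixed0, mixed2, mixed3, mixed8, mixed9)  # 256 288 768 1280 2048
```

So with the 224x224 input used in this article, the three Inception stages operate on 25x25, 12x12 and 5x5 feature maps, while the channel counts in the comments stay the same.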

2. Official model

# import tensorflow as tf
# model_2 = tf.keras.applications.InceptionV3()
# model_2.summary()

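V. Compile the Model

Before training, the model needs a few more settings, which are added in the compile step:

- Loss function (loss): measures how far the model's predictions are from the true labels during training; training tries to minimize it.

- Optimizer (optimizer): determines how the model is updated based on the data it sees and its loss function.

- Metrics (metrics): used to monitor the training and testing steps. The example below uses accuracy, the fraction of images that are correctly classified.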
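The loss used in this article, sparse_categorical_crossentropy, takes plain integer labels (as produced by image_dataset_from_directory); the per-sample loss is -log of the probability the model assigns to the true class, averaged over the batch. Below is a minimal NumPy sketch of that formula; sparse_ce is a hypothetical name, and the Keras implementation additionally clips probabilities for numerical stability.

```python
import numpy as np

def sparse_ce(labels, probs):
    """Mean of -log(probs[i, labels[i]]) over the batch."""
    labels = np.asarray(labels)
    probs = np.asarray(probs, dtype=np.float64)
    true_class_probs = probs[np.arange(len(labels)), labels]
    return float(np.mean(-np.log(true_class_probs)))

# Two samples, three classes; rows are softmax outputs
probs = [[0.7, 0.2, 0.1],
         [0.1, 0.8, 0.1]]
labels = [0, 1]
print(sparse_ce(labels, probs))  # mean of -log(0.7) and -log(0.8), about 0.290

# A model that spreads probability evenly over 24 classes scores ln(24)
uniform = np.full((1, 24), 1 / 24)
print(sparse_ce([5], uniform))  # about 3.178
```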

# Set up the optimizer; here I changed the learning rate (Adam's default is 1e-3)
opt = tf.keras.optimizers.Adam(learning_rate=1e-5)

model.compile(optimizer=opt,
              loss='sparse_categorical_crossentropy',
              metrics=['accuracy'])
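Adam keeps running averages of the gradient and of its square and divides one by the square root of the other, so on the first step each weight moves by about learning_rate regardless of the gradient's magnitude; lowering learning_rate from 1e-3 to 1e-5 therefore shrinks the steps roughly 100-fold. The plain-Python sketch below is the textbook single-parameter Adam update (adam_step is hypothetical; the Keras implementation places epsilon slightly differently).

```python
import math

def adam_step(theta, grad, m, v, t, lr=1e-5, beta1=0.9, beta2=0.999, eps=1e-7):
    """One textbook Adam update for a single scalar parameter (t starts at 1)."""
    m = beta1 * m + (1 - beta1) * grad          # running mean of gradients
    v = beta2 * v + (1 - beta2) * grad * grad   # running mean of squared gradients
    m_hat = m / (1 - beta1 ** t)                # bias correction
    v_hat = v / (1 - beta2 ** t)
    theta = theta - lr * m_hat / (math.sqrt(v_hat) + eps)
    return theta, m, v

theta_small, _, _ = adam_step(theta=1.0, grad=0.5, m=0.0, v=0.0, t=1)
theta_large, _, _ = adam_step(theta=1.0, grad=50.0, m=0.0, v=0.0, t=1)
print(theta_small, theta_large)  # both about 1.0 - 1e-5
```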

VI. Train the Model

epochs = 10

history = model.fit(
    train_ds,
    validation_data=val_ds,
    epochs=epochs
)

Epoch 1/10

1255/1255 [==============================] - 146s 77ms/step - loss: 3.9494 - accuracy: 0.3102 - val_loss: 0.6095 - val_accuracy: 0.8481

Epoch 2/10

1255/1255 [==============================] - 70s 56ms/step - loss: 0.7071 - accuracy: 0.8370 - val_loss: 0.1968 - val_accuracy: 0.9430

Epoch 3/10

1255/1255 [==============================] - 70s 56ms/step - loss: 0.2956 - accuracy: 0.9380 - val_loss: 0.0834 - val_accuracy: 0.9757

Epoch 4/10

1255/1255 [==============================] - 70s 56ms/step - loss: 0.1344 - accuracy: 0.9766 - val_loss: 0.0452 - val_accuracy: 0.9884

Epoch 5/10

1255/1255 [==============================] - 71s 57ms/step - loss: 0.0566 - accuracy: 0.9954 - val_loss: 0.0265 - val_accuracy: 0.9916

Epoch 6/10

1255/1255 [==============================] - 72s 57ms/step - loss: 0.0282 - accuracy: 0.9988 - val_loss: 0.0158 - val_accuracy: 0.9956

Epoch 7/10

1255/1255 [==============================] - 72s 57ms/step - loss: 0.0150 - accuracy: 0.9994 - val_loss: 0.0218 - val_accuracy: 0.9924

Epoch 8/10

1255/1255 [==============================] - 72s 57ms/step - loss: 0.0188 - accuracy: 0.9979 - val_loss: 0.0125 - val_accuracy: 0.9968

Epoch 9/10

1255/1255 [==============================] - 71s 57ms/step - loss: 0.0122 - accuracy: 0.9986 - val_loss: 0.0542 - val_accuracy: 0.9833

Epoch 10/10

1255/1255 [==============================] - 70s 56ms/step - loss: 0.0178 - accuracy: 0.9964 - val_loss: 0.0213 - val_accuracy: 0.9924
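The 1255 steps per epoch in the log follow from the batch size: 10038 training images in batches of 8 give 1254 full batches plus one final partial batch, which still counts as a step.

```python
import math

train_files = 10038
batch_size = 8

steps_per_epoch = math.ceil(train_files / batch_size)  # the last, partial batch still counts
last_batch = train_files - (steps_per_epoch - 1) * batch_size

print(steps_per_epoch, last_batch)  # 1255 6
```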

VII. Model Evaluation

acc = history.history['accuracy']
val_acc = history.history['val_accuracy']

loss = history.history['loss']
val_loss = history.history['val_loss']

epochs_range = range(epochs)

plt.figure(figsize=(12, 4))

plt.subplot(1, 2, 1)
plt.suptitle("Reply DL+13 to the WeChat official account (K同学啊) to get the dataset")
plt.plot(epochs_range, acc, label='Training Accuracy')
plt.plot(epochs_range, val_acc, label='Validation Accuracy')
plt.legend(loc='lower right')
plt.title('Training and Validation Accuracy')

plt.subplot(1, 2, 2)
plt.plot(epochs_range, loss, label='Training Loss')
plt.plot(epochs_range, val_loss, label='Validation Loss')
plt.legend(loc='upper right')
plt.title('Training and Validation Loss')

plt.show()
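Besides reading the curves, you can pick the best epoch directly from history.history. The sketch below does this in plain Python on a dict shaped like history.history, filled with the val_accuracy and val_loss values copied from the training log; best_epoch is a hypothetical helper.

```python
def best_epoch(history_dict, metric="val_accuracy", mode="max"):
    """Return (1-based epoch, value) of the best value of `metric`."""
    values = history_dict[metric]
    pick = max if mode == "max" else min
    best_value = pick(values)
    return values.index(best_value) + 1, best_value

# Values copied from the training log
history_dict = {
    "val_accuracy": [0.8481, 0.9430, 0.9757, 0.9884, 0.9916,
                     0.9956, 0.9924, 0.9968, 0.9833, 0.9924],
    "val_loss": [0.6095, 0.1968, 0.0834, 0.0452, 0.0265,
                 0.0158, 0.0218, 0.0125, 0.0542, 0.0213],
}

print(best_epoch(history_dict))                          # (8, 0.9968)
print(best_epoch(history_dict, "val_loss", mode="min"))  # (8, 0.0125)
```

Here both criteria point at epoch 8, while the final epoch is slightly worse; a callback such as tf.keras.callbacks.ModelCheckpoint with save_best_only=True is one way to keep the best weights instead of the last ones.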


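VIII. Save and Load the Model

import os

# Save the model (make sure the output directory exists first)
os.makedirs('model', exist_ok=True)
model.save('model/12_model.h5')

# Load the model
new_model = tf.keras.models.load_model('model/12_model.h5')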

IX. Prediction

# Use the loaded model (new_model) to look at the predictions
plt.figure(figsize=(10, 5))  # figure is 10 wide and 5 tall

for images, labels in val_ds.take(1):
    for i in range(8):
        ax = plt.subplot(2, 4, i + 1)

        # Show the image
        plt.imshow(images[i].numpy().astype("uint8"))

        # Add a batch dimension to the image
        img_array = tf.expand_dims(images[i], 0)

        # Use the model to predict the hand sign in the image
        predictions = new_model.predict(img_array)
        plt.title(class_names[np.argmax(predictions)])

        plt.axis("off")
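Calling new_model.predict once per image works, but the model can also score a whole batch in one call (for example new_model.predict(images)), returning one row of class probabilities per image. Turning those rows into letters is an argmax along the class axis. The NumPy sketch below shows only that step; the probability array is an illustrative stand-in, not real model output.

```python
import numpy as np

class_names = ['a', 'b', 'c', 'd', 'e', 'f', 'g', 'h', 'i', 'k', 'l', 'm',
               'n', 'o', 'p', 'q', 'r', 's', 't', 'u', 'v', 'w', 'x', 'y']

# Illustrative stand-in for predictions on a batch of 3 images: shape (3, 24)
predictions = np.full((3, len(class_names)), 0.01)
predictions[0, 0] = 0.9   # image 0 -> 'a'
predictions[1, 9] = 0.9   # image 1 -> 'k'
predictions[2, 23] = 0.9  # image 2 -> 'y'

pred_idx = np.argmax(predictions, axis=1)  # best class index per image
pred_letters = [class_names[i] for i in pred_idx]
print(pred_letters)  # ['a', 'k', 'y']
```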